Amped Software has its roots in academic research. The first prototype of Amped FIVE actually started with my master’s thesis project in Electronic Engineering. In this article, I want to talk a bit about the relationship between Amped Software and science, the scientific method, and scientific research. As the title suggests, we’ll discuss what science gives to Amped and what Amped is giving back to scientific fields such as multimedia forensics, forensic video analysis, and forensic image and video enhancement. Curious? Keep reading!

Justice Through Science

Amped’s vision is “Justice through science”, so science means a lot to us. It means we want to follow the scientific method in our product development cycle. It also means that we love doing research. This blog post is about both. First, it shows how research plays a role in our product development process. Then, it presents some of the topics we have been actively researching in recent years.

Product Development and the Scientific Method

Product Development

When we start implementing something new, we make sure it is based on algorithms that are well established in the scientific community. There must be a solid scientific article (affectionately called a “paper”) behind it, or perhaps a book chapter, as is often the case for more basic tools.

Our R&D team then reviews the paper. They carefully check which assumptions it makes and whether those assumptions have a realistic chance of being satisfied in real-world scenarios. This is sometimes a bit of a sore point. The “publish or perish” rule may lead researchers to invent and test a new method that only works under extremely narrow conditions: good for publishing, but hardly useful in reality. For example, a large share of the image forgery detection methods in the literature only work on uncompressed images, which is a totally unrealistic assumption in real-world conditions.

The next step is analyzing the experimental validation presented by the authors. If the tool looks promising, we move on to developing a prototype and repeating the validation on an independent and heterogeneous test dataset. And this is another sore point. We have found tools that looked very promising in the experiments reported by their authors, but proved far less reliable when tested independently. That’s why we always redo the validation ourselves, measuring performance with well-known tools such as Receiver Operating Characteristic (ROC) curves, average accuracy, confusion matrices, F-scores, etc. If everything goes well, we have a good candidate for inclusion in our software.
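
For readers less familiar with these metrics, here is a minimal sketch of how a confusion matrix, accuracy, F-score, and the area under the ROC curve can be computed from a labelled test set. It is written in Python with NumPy on made-up toy data; the function names are our own illustration, not code from Amped’s validation pipeline.

```python
import numpy as np

def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels, where 1/True means 'positive'."""
    t = np.asarray(y_true, dtype=bool)
    p = np.asarray(y_pred, dtype=bool)
    return (int(np.sum(t & p)), int(np.sum(~t & p)),
            int(np.sum(~t & ~p)), int(np.sum(t & ~p)))

def accuracy(tp, fp, tn, fn):
    return (tp + tn) / (tp + fp + tn + fn)

def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall, written as 2TP / (2TP + FP + FN)."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def roc_auc(y_true, scores):
    """Area under the ROC curve via the rank-sum identity: the probability that a
    random positive scores higher than a random negative (ties count one half)."""
    t = np.asarray(y_true, dtype=bool)
    s = np.asarray(scores, dtype=float)
    pos, neg = s[t], s[~t]
    greater = np.sum(pos[:, None] > neg[None, :])
    ties = np.sum(pos[:, None] == neg[None, :])
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))

# Toy usage: 1 = "forged", 0 = "pristine"; a detector outputs a score per image.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.4, 0.2, 0.1]
preds = [s >= 0.5 for s in scores]            # threshold chosen arbitrarily
tp, fp, tn, fn = confusion_counts(labels, preds)
print(tp, fp, tn, fn)                          # 2 0 3 1
print(accuracy(tp, fp, tn, fn), f1_score(tp, fp, fn), roc_auc(labels, scores))
```

Computing the ROC area through the rank-sum identity avoids picking a threshold at all, which is handy when comparing methods whose scores live on different scales.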

Scientific Method

But the scientific approach is not only part of algorithm selection and testing. It must also be part of the presentation stage, so as to guarantee repeatability and reproducibility. As our users know very well, the reports generated by Amped’s products always provide the complete list of parameters set by the user, and a bibliographic reference for each enhancement, restoration, or authentication filter used.
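
As an illustration of the idea, here is a sketch of a processing log that records every step together with its parameters and reference, so that someone else can re-run and check the same chain. The FilterStep and render_report names and the text layout are hypothetical and do not reflect the actual format of Amped reports.

```python
from dataclasses import dataclass, field

@dataclass
class FilterStep:
    """One processing step as it might be logged for a report (hypothetical format)."""
    name: str
    params: dict = field(default_factory=dict)
    reference: str = ""

def render_report(steps):
    """Return report lines listing every step, its parameters and its reference."""
    lines = []
    for i, step in enumerate(steps, start=1):
        # Sort parameters so the same chain always renders identically.
        params = ", ".join(f"{k}={v!r}" for k, v in sorted(step.params.items()))
        lines.append(f"{i}. {step.name} ({params})")
        if step.reference:
            lines.append(f"   Reference: {step.reference}")
    return lines

chain = [
    FilterStep("Crop", {"x": 10, "y": 20, "width": 64, "height": 32}),
    FilterStep("Deblur", {"radius": 2.5}, "placeholder bibliographic reference"),
]
print("\n".join(render_report(chain)))
```

Rendering the parameters deterministically is a small detail, but it means two runs of the same chain produce byte-identical reports that can be compared directly.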

Okay, all of the above was about how we use science to guide our development. Let’s now turn to the second point: how we contribute to science!

What Our Research Team Works On

For a company that lives on the bleeding edge of technology, it is obviously essential to keep up to date with the most recent academic results. But we don’t like being just spectators. We want to play the game, discover and learn new things. Active research is also a great way to stay up to date.

In the last few years, Amped has invested considerable time in research, and not just in the “low-hanging fruit”. This has taken the form of collaborations with universities, through master’s theses and internships, and of direct involvement of Amped employees in research activities. The lovely part is that people very often keep working with us as Amped employees after they finish their studies.

Here are some of the topics we’ve been working on, in reverse chronological order.

PRNU-based Source Camera Identification Reliability on Modern Devices

PRNU Analysis

Those in the image forensics field probably know what PRNU analysis is. PRNU stands for Photo Response Non-Uniformity, which is responsible for a specific kind of noise affecting digital images. PRNU noise has been shown to be unique to each imaging sensor. Therefore, by comparing an image’s PRNU noise against the reference noise pattern computed for a camera, we may be able to establish a strong link between the camera and the image, just as ballistics analysis does with a bullet and the gun that fired it (indeed, PRNU analysis is also known as “image ballistics”). According to a vast and sound body of scientific literature, the false positive rate of PRNU source identification is rather low when the analysis is conducted properly.
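
The basic pipeline can be sketched in a few lines: extract a noise residual W = I - F(I) with a denoiser F, estimate the camera fingerprint as K = sum(W_i * I_i) / sum(I_i ** 2) over several reference images, then correlate a query’s residual with I * K. The sketch below is a simplified illustration on synthetic data, assuming a toy camera model (scene times (1 + K) plus additive noise); it swaps in a 3x3 box filter for the wavelet denoiser and a plain normalized correlation for the detection statistic used in the literature.

```python
import numpy as np

def denoise(img):
    """3x3 box filter with edge padding (a crude stand-in for the wavelet denoiser
    normally used for PRNU extraction)."""
    h, w = img.shape
    p = np.pad(img, 1, mode="edge")
    out = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            out += p[dy:dy + h, dx:dx + w]
    return out / 9.0

def noise_residual(img):
    """W = I - F(I): what remains after removing the (denoised) scene content."""
    return img - denoise(img)

def estimate_fingerprint(images):
    """Estimate the PRNU factor K as sum(W_i * I_i) / sum(I_i ** 2)."""
    num = np.zeros(images[0].shape)
    den = np.zeros(images[0].shape)
    for img in images:
        num += noise_residual(img) * img
        den += img ** 2
    return num / den

def ncc(a, b):
    """Normalized cross-correlation of two arrays at zero shift."""
    a = a - a.mean()
    b = b - b.mean()
    return float((a * b).sum() / np.sqrt((a * a).sum() * (b * b).sum()))

def match_score(query, fingerprint):
    """Correlate the query's residual with the expected PRNU term I * K."""
    return ncc(noise_residual(query), query * fingerprint)

# Hypothetical camera model: I = scene * (1 + K) + additive noise.
SHAPE = (64, 64)

def simulate_camera(rng):
    return rng.normal(0.0, 0.02, SHAPE)

def shoot(K, rng):
    scene = rng.uniform(80, 200) * np.ones(SHAPE)   # flat, evenly lit scene
    return scene * (1 + K) + rng.normal(0.0, 2.0, SHAPE)

rng = np.random.default_rng(0)
K_a, K_b = simulate_camera(rng), simulate_camera(rng)
reference = estimate_fingerprint([shoot(K_a, rng) for _ in range(20)])
same_camera = match_score(shoot(K_a, rng), reference)
other_camera = match_score(shoot(K_b, rng), reference)
print(same_camera, other_camera)
```

In this toy setup the same-camera score comes out much higher than the other-camera score. Practical implementations usually rely on a peak-to-correlation energy (PCE) statistic and a decision threshold, and real devices are far less well behaved than this simulation.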

Experiments with PRNU Analysis at the University of Florence

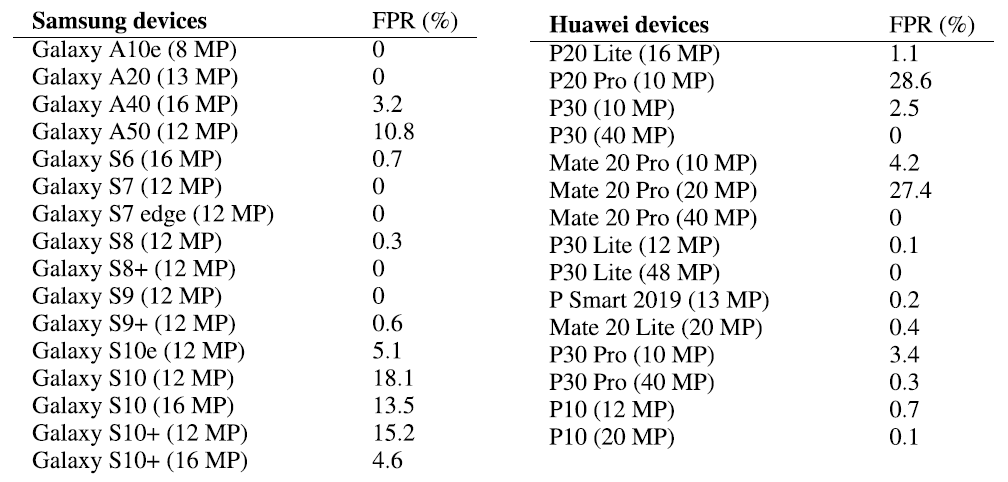

In 2020, a master’s student supervised by Prof. Alessandro Piva at the University of Florence was carrying out some experiments with PRNU analysis on Samsung smartphones. A surprising number of false positives showed up! Amped has a very strong link with Prof. Piva’s group, so we got in touch and decided to broaden the experiment. We downloaded over 33,000 images from 372 different smartphone exemplars and 114 DSLR cameras, and carried out a standard PRNU analysis using the implementation made available by the inventors of PRNU analysis.

We then discovered that, for several recent smartphone models, the false positive rate (FPR) was exceedingly high. To cite a few, under classical testing conditions the Samsung Galaxy S10’s FPR was well above 13%, and the Huawei P20 Pro’s was above 28%.
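
The false positive rate here is simply FP / (FP + TN), computed over comparisons between images and fingerprints of different devices. A minimal sketch of how it can be broken down per device model follows; the scores and models are made-up placeholders, and the threshold of 60 in the usage line is an assumption (a value often used with the PCE statistic in the literature), not data from the paper.

```python
from collections import defaultdict

def fpr_by_model(impostor_scores, threshold):
    """impostor_scores: iterable of (device_model, score) pairs, each score coming
    from comparing an image with the fingerprint of a *different* device.
    Returns {model: FP / (FP + TN)}, where FP = scores at or above the threshold."""
    total = defaultdict(int)
    false_pos = defaultdict(int)
    for model, score in impostor_scores:
        total[model] += 1
        if score >= threshold:
            false_pos[model] += 1
    return {model: false_pos[model] / total[model] for model in total}

# Made-up scores for two hypothetical models, A and B.
comparisons = [("A", 10), ("A", 70), ("A", 5), ("A", 1),
               ("B", 100), ("B", 80), ("B", 2), ("B", 3)]
print(fpr_by_model(comparisons, threshold=60))  # {'A': 0.25, 'B': 0.5}
```

Grouping by model is the point: an overall FPR averaged across many devices can look reassuring while one specific model performs very badly.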

Breakthrough Discovery

We believed this to be a breakthrough discovery, since PRNU analysis is considered very reliable and is therefore regularly employed by many Law Enforcement Agencies. We presented the experiments and results in a scientific paper that went through a thorough and useful review process and is now publicly available to anyone: A Leak in PRNU Based Source Identification—Questioning Fingerprint Uniqueness. To spread the news among our users, we also published an article on this blog. So if your unit works with PRNU analysis, we recommend that you take a look at the paper. We also suggest carrying out a dedicated validation for the specific device model before working with the real evidence images.

Perspective Stabilization and Super Resolution

This is a success story we are very proud of. It started a few years ago. One of the most common pieces of feedback from our users was that, although Amped FIVE allowed them to align the perspective of a moving license plate manually (with the Perspective Registration filter), the process was very time-consuming and sensitive to small user errors. There was strong demand for forensic enhancement software that would automate the perspective alignment of a license plate (or other flat surfaces, such as a smartphone’s screen).

Perspective alignment is considered an easy task in computer vision. However, that holds when you have large objects, which provide many “keypoints” that can be matched and aligned between neighboring frames. If you try using standard methods to align a 6-by-3 rectangle of pixels in a blurred frame, you’ll be very disappointed. Perspective alignment in such a corner case was a real challenge. Luckily, our own Gabriele Guarnieri, Ph.D., had both the mathematical skills and the true engineer’s passion needed to tackle it.
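
For context, the textbook approach when the four corners of a planar object are known in a frame is to estimate a homography with the Direct Linear Transform (DLT) and warp the frame onto a fronto-parallel rectangle. The NumPy sketch below shows only that classic step, on toy coordinates; it is not the method Amped developed, and it assumes reliable corner correspondences, which is exactly what a tiny, blurred plate fails to provide.

```python
import numpy as np

def homography_from_points(src, dst):
    """Estimate H (3x3) such that dst ~ H @ [x, y, 1] with the Direct Linear Transform.
    Needs >= 4 correspondences, no three collinear; no normalization or RANSAC."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    A = np.asarray(rows, dtype=float)
    # The solution is the right singular vector for the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]

def apply_homography(H, pts):
    """Map (x, y) points through H, dividing by the homogeneous coordinate."""
    pts = np.asarray(pts, dtype=float)
    hom = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return hom[:, :2] / hom[:, 2:3]

# Usage: map the four corners of a plate seen in perspective onto a
# fronto-parallel 6x3 rectangle (toy coordinates).
observed = [(10.0, 12.0), (17.5, 11.0), (18.0, 15.5), (10.5, 16.0)]
target = [(0.0, 0.0), (6.0, 0.0), (6.0, 3.0), (0.0, 3.0)]
H = homography_from_points(observed, target)
rectified = apply_homography(H, observed)
```

With only four exact points the fit is perfect by construction; with real, noisy corners from a few blurred pixels, small errors in each frame translate into visible jitter, which is why this naive approach falls short on license plates.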

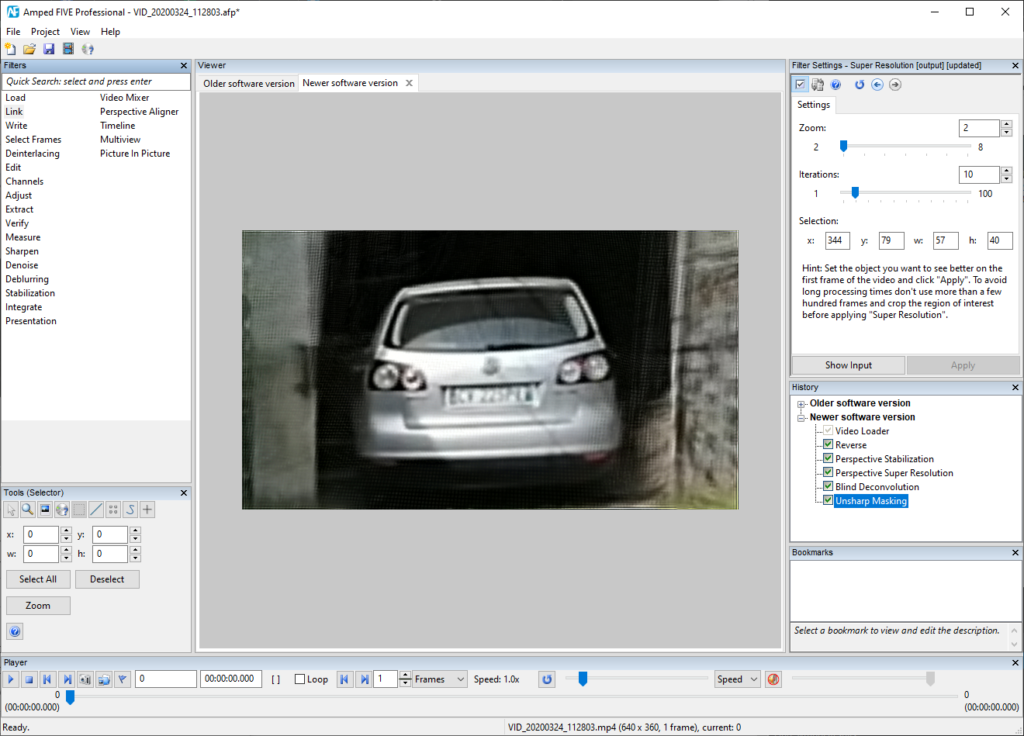

After several months of work involving Prof. Sergio Carrato’s research group at the University of Trieste, we came up with a method for perspective stabilization and super-resolution, which has been published in Forensic Science International: Digital Investigation. It is now implemented in Amped FIVE’s Perspective Registration and Perspective Super Resolution filters.
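
The published method is considerably more sophisticated, but the basic intuition behind multi-frame super-resolution can be shown with a naive shift-and-add sketch: once frames are registered to a common plane, each one samples the plate at a slightly different sub-pixel position, and those samples can be merged on a finer grid. The sketch assumes purely translational offsets that are exact multiples of 1/factor of a low-resolution pixel, with no blur and no noise; real footage never behaves this nicely.

```python
import numpy as np

def shift_and_add(frames, offsets, factor):
    """Naive shift-and-add super-resolution.
    frames: registered low-res frames, all h x w.
    offsets: per-frame (dy, dx) sub-pixel offsets, in high-res pixels,
             integers in [0, factor).
    Returns an (h*factor) x (w*factor) image; cells never observed stay NaN."""
    h, w = frames[0].shape
    acc = np.zeros((h * factor, w * factor))
    count = np.zeros_like(acc)
    for frame, (dy, dx) in zip(frames, offsets):
        acc[dy::factor, dx::factor] += frame
        count[dy::factor, dx::factor] += 1
    with np.errstate(invalid="ignore", divide="ignore"):
        return acc / count   # average of all samples that landed on each cell

rng = np.random.default_rng(0)
high_res = rng.uniform(0, 255, (8, 12))
offsets = [(0, 0), (0, 1), (1, 0), (1, 1)]
# Simulate four decimated, perfectly registered observations of the same plate.
frames = [high_res[dy::2, dx::2] for dy, dx in offsets]
restored = shift_and_add(frames, offsets, factor=2)
```

The NaN cells make the key dependency visible: without enough frames covering different sub-pixel positions there is simply no extra information to recover, and any error in registration puts samples in the wrong cells.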

Users simply love these new filters (as they confirmed during this year’s Amped User Days), since the filters save them a lot of time and deliver results that were previously out of reach.

Test video with a low-resolution license plate recorded by a runner. Notice the strong variation in perspective.

The license plate was obtained from the linked video above using the Perspective Stabilization and Super Resolution filters followed by slight deblurring.

Detecting Video Re-Compression for Integrity Analysis

Integrity verification is a fundamental part of a thorough forensic analysis. Before you even start examining your evidence, you should ask yourself whether you have been given the original evidence. If you have been given an export, perhaps a video that has been transcoded, that is a problem: you are not dealing with the original pixels!

A video whose integrity is preserved should have only undergone one compression, at acquisition time. Therefore, one possible way of checking the integrity of a video is understanding whether it has been compressed more than once.

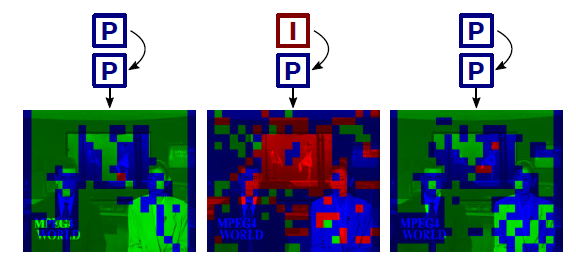

Amped has partnered with researchers from the University of Florence and the University of Vigo (Spain) to look for a reliable, flexible, and robust analytical method for detecting video re-encoding. You provide a video, and the algorithm tells you whether it detects traces of transcoding. There is no need for external datasets or reference material. The idea builds upon the Variation of Prediction Footprint (VPF), which basically says: whenever a video is re-encoded and one of the original I-frames is recompressed as a P- or B-frame in the final video, an abrupt variation is observed in the prediction modes used for macroblocks (MBs). Specifically, that frame has more intra-coded MBs and fewer skipped MBs. This is shown graphically below (and you can check it yourself with Amped FIVE’s Macroblocks filter).
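
To make the VPF idea concrete, here is a toy detector that works on per-frame macroblock statistics (frame type, number of intra-coded MBs, number of skipped MBs), assumed to have been extracted beforehand, for instance from a macroblock analysis. The comparison with the nearest P/B neighbours and the ratio of 2 are arbitrary illustrative choices, not the detector published in the paper.

```python
def _nearest_non_intra(frame_types, n, step):
    """Index of the closest frame from n (moving by step) that is not an I-frame, or None."""
    k = n + step
    while 0 <= k < len(frame_types) and frame_types[k] == "I":
        k += step
    return k if 0 <= k < len(frame_types) else None

def vpf_candidates(frame_types, intra, skipped, ratio=2.0):
    """Flag P/B frames whose intra-MB count jumps up and skipped-MB count drops
    by at least `ratio` compared with the nearest P/B neighbours on both sides."""
    hits = []
    for n, ftype in enumerate(frame_types):
        if ftype == "I":
            continue
        prev = _nearest_non_intra(frame_types, n, -1)
        nxt = _nearest_non_intra(frame_types, n, +1)
        if prev is None or nxt is None:
            continue
        more_intra = intra[n] > ratio * max(intra[prev], intra[nxt])
        fewer_skip = ratio * skipped[n] < min(skipped[prev], skipped[nxt])
        if more_intra and fewer_skip:
            hits.append(n)
    return hits

# Toy per-frame MB statistics for a 12-frame stretch (hypothetical numbers).
types = ["I"] + ["P"] * 11
intra = [396, 5, 6, 5, 80, 6, 5, 4, 6, 5, 5, 6]
skipped = [0, 200, 190, 210, 40, 205, 200, 195, 210, 200, 205, 200]
print(vpf_candidates(types, intra, skipped))  # [4]
```

Comparing against P/B neighbours rather than any adjacent frame matters: an I-frame of the new encoding is fully intra-coded and would otherwise mask the footprint on the frames next to it.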

Paper on Video Integrity Verification and GOP Size Estimation

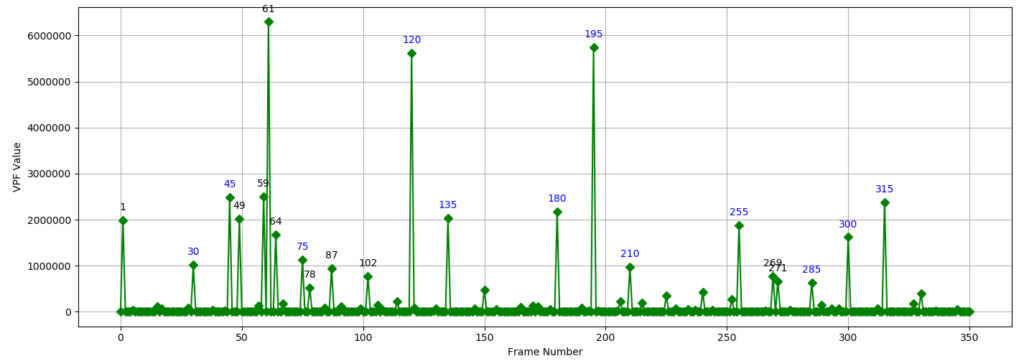

This and more advanced analyses have been presented in the paper Video Integrity Verification and GOP Size Estimation via Generalized Variation of Prediction Footprint, published in the prestigious IEEE Transactions on Information Forensics and Security. The paper shows that not only can we detect double encoding, but we can also estimate the GOP size of the previous compression. Indeed, assuming that the original recorder used a fixed GOP size, we expect the VPF effect to appear periodically when analyzing the recompressed video. For example, this is the “VPF signal” computed on a video that was “exported as MP4” from a DVR. Can you see the spikes marked in blue? They all fall at multiples of 15. That is a strong indication that this video has been transcoded and that the GOP size of the original video was 15.
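
The periodicity reasoning can be sketched in a few lines: given the frame indices where VPF spikes appear, look for the largest period that explains most of them. Taking the largest matters because every divisor of the true GOP size (5 and 3 for a GOP of 15) also fits the spikes. The sketch assumes the original recording started with an I-frame at frame 0, was not trimmed, and used a fixed GOP; the 75% tolerance is an arbitrary choice, and this is not the estimator from the paper.

```python
def estimate_gop(spike_frames, min_fraction=0.75, min_hits=2):
    """Estimate the original GOP size from frame indices showing VPF spikes.
    Returns the largest period p such that at least min_fraction of the spikes
    (and at least min_hits of them) fall on multiples of p, or None."""
    # Frame 0 is an I-frame in both encodings, so it carries no information.
    spikes = sorted(set(i for i in spike_frames if i > 0))
    if len(spikes) < min_hits:
        return None
    # Scan candidate periods from the largest down, so divisors lose to the true GOP.
    for p in range(spikes[-1], 1, -1):
        hits = sum(1 for i in spikes if i % p == 0)
        if hits >= min_hits and hits >= min_fraction * len(spikes):
            return p
    return None

print(estimate_gop([15, 30, 45, 60, 75, 90]))   # 15
print(estimate_gop([15, 22, 30, 45, 60, 75]))   # 15 (one spurious spike at 22)
```

The tolerance lets a stray spike through without derailing the estimate, at the cost of needing enough genuine spikes to outvote it.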

Stay tuned, because this feature will soon become part of Amped Authenticate!

The Theoretical Justification for Ordering Restoration Filters

This research topic is a rather curious one. Those who have attended our training know how much emphasis we put on the “correct order of filters.” The footage you’re working with is the product of a long acquisition and processing chain, which usually introduces several artifacts. The most reasonable approach is to compensate for these artifacts in reverse order. This sounds pretty intuitive even in everyday life: when you dress, you normally put your clothes on in a certain order, and when you undress (undoing the chain), you take them off in the reverse order.

And if you get the order wrong, it can make a huge difference in the results. Besides the intuitive explanation above, there is also a mathematical justification for this approach. However, it only holds when you have the exact inverse of every function (in our case, every defect). This is of course not the case in image restoration, where we only have approximate inverse operators. For example, if you have a blurred image, you can effectively deblur it with Amped FIVE, but the result will never be as perfect as if there had been no blur at all.
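
In the exact case, the mathematical argument boils down to this: if the chain applies f and then g, its inverse applies the inverse of g first and then the inverse of f. A toy NumPy illustration follows, with two made-up, exactly invertible defects that do not commute (a spatially varying gain and a circular shift); real defects such as blur or compression lack exact inverses, which is precisely the open problem.

```python
import numpy as np

# Two toy "defects" with exact inverses that do NOT commute with each other.
def apply_gain(img, gain):
    """Spatially varying gain, loosely vignetting-like."""
    return img * gain

def undo_gain(img, gain):
    return img / gain

def apply_shift(img, dy, dx):
    """Circular shift, standing in for a misalignment."""
    return np.roll(img, (dy, dx), axis=(0, 1))

def undo_shift(img, dy, dx):
    return np.roll(img, (-dy, -dx), axis=(0, 1))

rng = np.random.default_rng(1)
x = rng.uniform(0.0, 1.0, (16, 16))                  # "true" scene
yy, xx = np.mgrid[0:16, 0:16]
r2 = ((yy - 7.5) ** 2 + (xx - 7.5) ** 2) / (2 * 7.5 ** 2)
gain = 1.0 - 0.5 * r2                                 # close to 1.0 at center, 0.5 in corners

# Acquisition chain: gain first, then shift.
observed = apply_shift(apply_gain(x, gain), 3, 5)

# Undo the defects in reverse order: shift first, then gain.
right = undo_gain(undo_shift(observed, 3, 5), gain)
# The same two steps applied in the wrong order.
wrong = undo_shift(undo_gain(observed, gain), 3, 5)

print(np.abs(right - x).max(), np.abs(wrong - x).max())
```

Both restorations use the very same two operations; only their order differs, and only the reverse order recovers the scene.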

Therefore, we are trying to extend the mathematical justification to a more realistic image processing scenario. It is a very complex topic, and we’re partnering with professors from both mathematics and engineering faculties to put the pieces together. If we get there, it will be a major achievement!

More from the Past, Even More in the Future!

Those above are just some of the topics we’ve been working on. In 2019, we presented a paper about denoising and reading severely degraded license plates, developed by our brilliant Gianmaria Rossi together with Prof. Simone Milani from the University of Padua. A few months earlier, we had investigated the challenging problem of hybrid PRNU-based source identification, that is, the case where only images are available for reference creation but the evidence is a video.

And what about now? We’re currently pursuing new topics in the fields of deepfake image detection, assisted camera calibration, and AI-based fusion of multiple forgery localization maps. In collaboration with the University of Trieste, we’re also investigating how AI-based face super-resolution affects an observer’s ability to recognize an individual.

As you may have noticed throughout this article, we collaborate with several universities, both in Italy and abroad. So if you’re a student, an academic, or even a police officer interested in partnering with us on new research, just contact us and let us know!