The rapid advancement of artificial intelligence (AI) is transforming many aspects of human life. Recent breakthroughs in large language models have empowered them to produce essays indistinguishable from human writing. Similarly, AI can now create images from simple textual prompts, generating pictures that look authentic yet are entirely fabricated. This ability to synthesize realistic images raises significant concerns about the credibility of visual evidence as proof of facts.

The technology behind so-called “diffusion-based” image synthesis, exemplified by projects like Midjourney, Dall-E, and Stable Diffusion, relies on complex mechanisms involving deep neural networks.

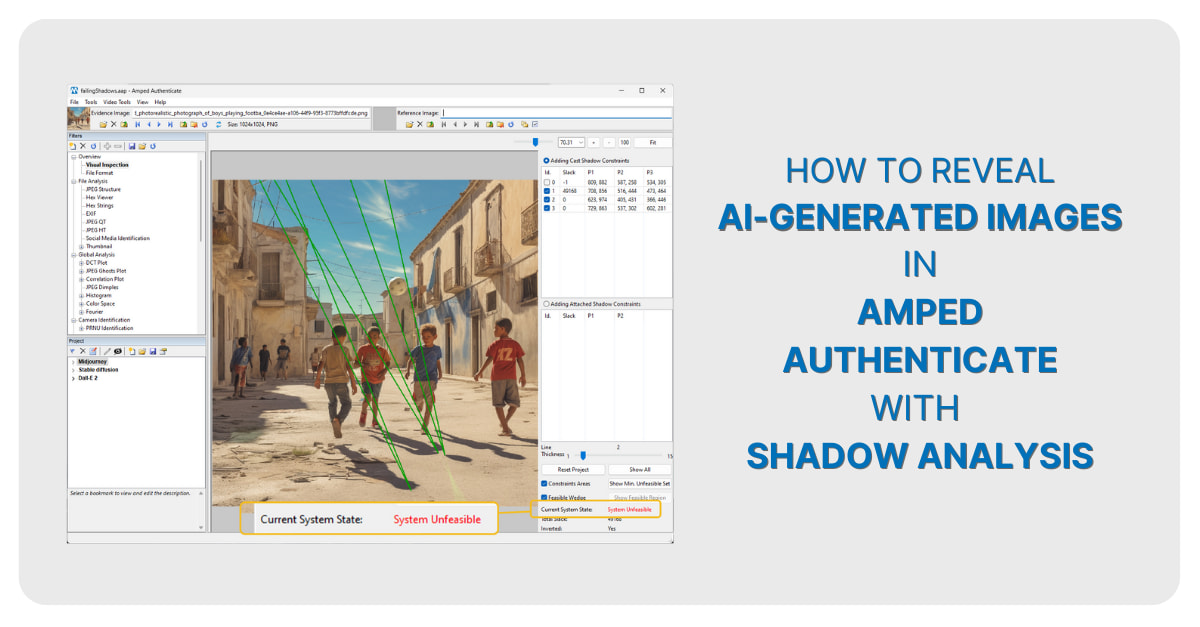

One approach to detecting AI-generated images is to use geometrical analysis to assess consistency within the image. This article shows how to reveal AI-generated images by analyzing shadows and reflections. Shadows are analyzed using Amped Authenticate’s Shadows filter, which leverages scientific research on the properties of images illuminated by a single point light source (such as the Sun or a light bulb). By defining constraints on several shadows and analyzing them together, it’s possible to determine whether they are mutually consistent and compatible with a single genuine light source. This method can reveal inconsistencies within AI-generated images, whose shadows may not follow the expected behavior of real-world shadows.
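The idea of checking several shadow constraints against a single light source can be sketched in a few lines of Python. This is a simplified illustration, not Amped Authenticate's implementation. It assumes each constraint is a wedge in image coordinates: an apex on the shadow plus two points bounding the part of the object that could have cast it. It also assumes the light projects to a finite location inside a large bounding square. The function names and the `bound` parameter are hypothetical.

```python
def cross(ax, ay, bx, by):
    """z-component of the 2D cross product."""
    return ax * by - ay * bx


def clip_half_plane(polygon, apex, direction):
    """Clip a convex polygon to the side of the line through `apex` along
    `direction` where cross(direction, p - apex) >= 0."""
    def side(p):
        return cross(direction[0], direction[1], p[0] - apex[0], p[1] - apex[1])

    clipped = []
    for i, cur in enumerate(polygon):
        nxt = polygon[(i + 1) % len(polygon)]
        s_cur, s_nxt = side(cur), side(nxt)
        if s_cur >= 0:
            clipped.append(cur)
        # The edge crosses the boundary line: keep the crossing point.
        if (s_cur > 0 > s_nxt) or (s_cur < 0 < s_nxt):
            t = s_cur / (s_cur - s_nxt)
            clipped.append((cur[0] + t * (nxt[0] - cur[0]),
                            cur[1] + t * (nxt[1] - cur[1])))
    return clipped


def polygon_area(polygon):
    """Unsigned area via the shoelace formula (0 for fewer than 3 vertices)."""
    if len(polygon) < 3:
        return 0.0
    total = 0.0
    for i, (x1, y1) in enumerate(polygon):
        x2, y2 = polygon[(i + 1) % len(polygon)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def feasible_light_region(wedges, bound=1e5):
    """Intersect all wedges inside a large square (assumed search area).

    Each wedge is (apex, edge_a, edge_b); the result is a polygon, possibly empty.
    """
    region = [(-bound, -bound), (bound, -bound), (bound, bound), (-bound, bound)]
    for apex, edge_a, edge_b in wedges:
        da = (edge_a[0] - apex[0], edge_a[1] - apex[1])
        db = (edge_b[0] - apex[0], edge_b[1] - apex[1])
        turn = cross(da[0], da[1], db[0], db[1])
        if turn == 0:
            raise ValueError("wedge edges must not be collinear")
        if turn < 0:
            da, db = db, da  # orient the wedge so that cross(da, db) > 0
        region = clip_half_plane(region, apex, da)
        region = clip_half_plane(region, apex, (-db[0], -db[1]))
    return region


def shadows_consistent(wedges):
    """True if some region of the plane satisfies every wedge constraint."""
    return polygon_area(feasible_light_region(wedges)) > 0.0
```

If `shadows_consistent` returns `False`, no single projected light position satisfies all the constraints, which is the kind of inconsistency the paragraph above describes. A `True` result only means the chosen constraints do not contradict each other; it does not prove the image is authentic.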

Reflection analysis is another way to expose AI-generated images. The technique checks whether reflections are consistent with real-world geometry: when objects are reflected on a flat surface, the lines connecting each object point to its reflection should converge at a single vanishing point. AI-generated images often struggle to maintain this consistency, which makes the check valuable for detection. Amped Authenticate provides an Annotation tool that allows analysts to draw lines connecting landmark points to their reflection points. In authentic images, these lines roughly converge at a single point; strong deviations suggest the image should not be trusted.
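The convergence check can also be expressed numerically. The sketch below is an illustration under stated assumptions rather than a feature of Amped Authenticate: it takes hand-picked (object point, reflection point) pairs in pixel coordinates, estimates the point closest to all connecting lines in the least-squares sense, and reports how far each line passes from that point. The function names are hypothetical, and deciding what counts as a "strong deviation" is left to the analyst.

```python
import math


def fit_vanishing_point(pairs):
    """Least-squares intersection of the lines through each (object, reflection) pair.

    Returns (x, y), or None when the lines are (nearly) parallel, in which
    case the vanishing point lies at infinity.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (x1, y1), (x2, y2) in pairs:
        dx, dy = x2 - x1, y2 - y1
        length = math.hypot(dx, dy)
        if length == 0:
            raise ValueError("object and reflection points must differ")
        nx, ny = -dy / length, dx / length  # unit normal of the line
        c = nx * x1 + ny * y1               # line equation: nx*x + ny*y = c
        a11 += nx * nx
        a12 += nx * ny
        a22 += ny * ny
        b1 += nx * c
        b2 += ny * c
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        return None
    # Solve the 2x2 normal equations with Cramer's rule.
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)


def line_distances(pairs, point):
    """Perpendicular distance (in pixels) from `point` to each connecting line."""
    px, py = point
    distances = []
    for (x1, y1), (x2, y2) in pairs:
        dx, dy = x2 - x1, y2 - y1
        length = math.hypot(dx, dy)
        distances.append(abs(dx * (py - y1) - dy * (px - x1)) / length)
    return distances
```

With at least three pairs, small distances indicate that the lines roughly converge, while one or more large distances flag a reflection that does not share the common vanishing point. Two pairs always intersect exactly (unless parallel), so they cannot reveal an inconsistency on their own.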

In this article, we’ll show some experiments involving AI-generated images from systems such as Dall-E2, Midjourney, and Stable Diffusion. Through shadow and reflection analysis, we identified inconsistencies in the synthesized images. Not all AI-generated images exhibit such inconsistencies, but when they do, these methods provide a reliable means of identification. These techniques also have the advantage of being explainable, repeatable, and reproducible.

In conclusion, as AI-generated images become more and more realistic, the need for robust detection methods grows. This article highlights the importance of geometrical analysis, specifically shadow and reflection analysis, as a potentially powerful tool for uncovering AI-generated images. By examining the physical and geometrical consistency of shadows and reflections, these techniques provide explainable results that help identify the synthetic nature of images that could otherwise deceive the human eye.

To read the full article, published on Forensic Focus, check here: https://www.forensicfocus.com/articles/how-to-reveal-ai-generated-images-by-checking-shadows-and-reflections-in-amped-authenticate/