Our initial post on the subject of Aspect Ratio correction detailed the complexities surrounding how an image should be displayed, and the importance of understanding how our digital data has been created. This post will go into the information reported by Amped FIVE and how to use that information to make any necessary adjustments.

Let’s have a recap!

- SAR Storage Aspect Ratio: Width by height ratio of the encoded digital video (calculated simply by dividing the width by the height of the actual stored frame).

- SAR Sample Aspect Ratio: Width by height ratio of the pixels with respect to the original source (information, if available, that is stored within MPEG-4 streams).

- DAR Display Aspect Ratio: Width by height ratio of the data as it is supposed to be displayed (information, if available, that is stored within the file or calculated using other values).

- PAR Pixel Aspect Ratio: Width by height ratio of the pixels with respect to the original source (like Sample Aspect Ratio, but calculated by dividing DAR by Storage Aspect Ratio).

It’s unfortunate that we have to give two different definitions for the same “SAR” acronym. Different standards and pieces of software use the same acronym, often without explicitly saying whether it means Storage Aspect Ratio or Sample Aspect Ratio.
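To keep the two calculated ratios apart, here is a minimal Python sketch of the arithmetic in the recap. The function names are my own and are not taken from Amped FIVE, and the 720 x 576 frame with a 4:3 display ratio is only an illustrative input.

```python
from fractions import Fraction

def storage_aspect_ratio(width, height):
    """Storage Aspect Ratio: stored frame width divided by stored frame height."""
    return Fraction(width, height)

def pixel_aspect_ratio(dar, storage_ar):
    """Pixel Aspect Ratio: Display Aspect Ratio divided by Storage Aspect Ratio."""
    return dar / storage_ar

# Illustrative values: a 720 x 576 stored frame meant to be shown at 4:3.
storage_ar = storage_aspect_ratio(720, 576)            # reduces to 5/4
par = pixel_aspect_ratio(Fraction(4, 3), storage_ar)   # (4/3) / (5/4) = 16/15
print(storage_ar, par)
```

Working with fractions rather than decimals keeps the ratios exact, which makes it easier to compare them with the values a tool reports.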

How are these values displayed and calculated in Amped FIVE?

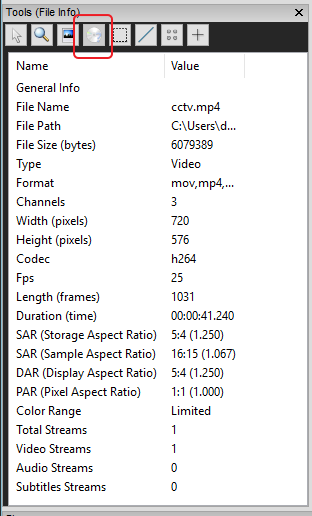

First up, we have the Quick Info Tool:

This reports that our stored pixel width and height is 720 x 576.

The aspect ratio of these measurements is 5:4.

This value is stated under SAR (Storage Aspect Ratio). Notice how the decimal calculation is also present. This value is obtained by dividing the width by the height.

The next value is SAR (Sample Aspect Ratio). This value is read from data within the stream. It states that each pixel has a Sample size of 16:15. We must remember this as we will require this information shortly.

The DAR (Display Aspect Ratio) is presented next. This File Info dialogue purely reads the information from the file and does not perform any automatic calculations. As a result, the Display Aspect Ratio is calculated in the same way as the Storage Aspect Ratio. It is important to remember though that if the container has instructions to change the Display Aspect Ratio then this will be read here.

Lastly, we have the PAR (Pixel Aspect Ratio). This again is a calculated value, obtained from the Storage Aspect Ratio and the Display Aspect Ratio.

The first issue identified in this information is the Sample Aspect Ratio of 16:15. For those who have forgotten the math from the initial post, this informs the decoder of MPEG-4 files that:

- Width should be multiplied by 16 (720 x 16 = 11520)

- Height should be multiplied by 15 (576 x 15 = 8640)

- The results should be divided (11520 / 8640)

- Final result will be the Intended Display Aspect Ratio (1.3333)

So, the correct Display Aspect Ratio to present the footage as intended is 1.333 or 4:3.
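The steps above can be sketched in a few lines of Python. This is only the arithmetic from the list, not how FIVE or any decoder implements it, and the function name is hypothetical.

```python
from fractions import Fraction

def display_aspect_ratio(width, height, sar_num, sar_den):
    """Intended Display Aspect Ratio from the stored size and the Sample Aspect Ratio."""
    # (width * sar_num) / (height * sar_den), kept as an exact fraction
    return Fraction(width * sar_num, height * sar_den)

dar = display_aspect_ratio(720, 576, 16, 15)   # 11520 / 8640
print(dar)                                     # 4/3
print(round(float(dar), 4))                    # 1.3333
```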

Why doesn’t FIVE do the calculations for me?

This Quick File Info tool just reads the information. It is up to you, and me, to identify the important information and then use that to assist in the analysis.

As a result of seeing this information, the next thing I must then do is to analyse the data further.

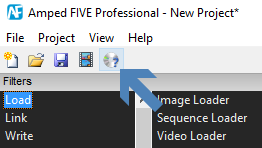

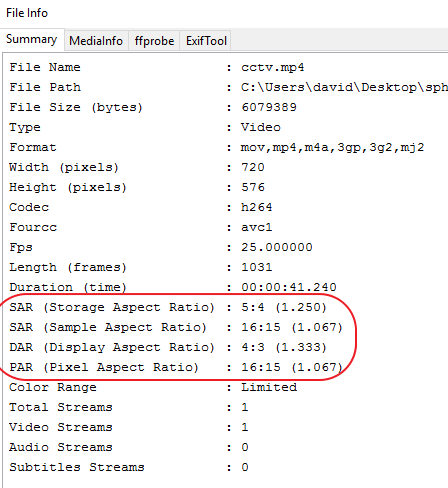

It’s time to take a look at the main File Info Tool. Remember, it’s at the top…

It is here that we find something different, and it can confirm our calculations. You could, of course, do it the other way around, and check here first… and then do the math to validate what the tool is telling you.

The first page is a summary of the information. I can see straight away that the DAR is being calculated from the Sample aspect ratio and presented correctly as 4:3.

You can also view the associated output from MediaInfo, FFprobe, etc.

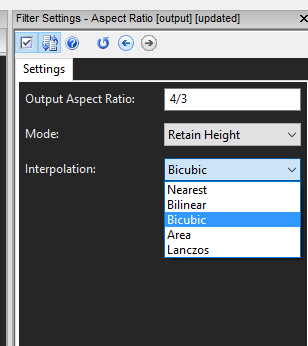

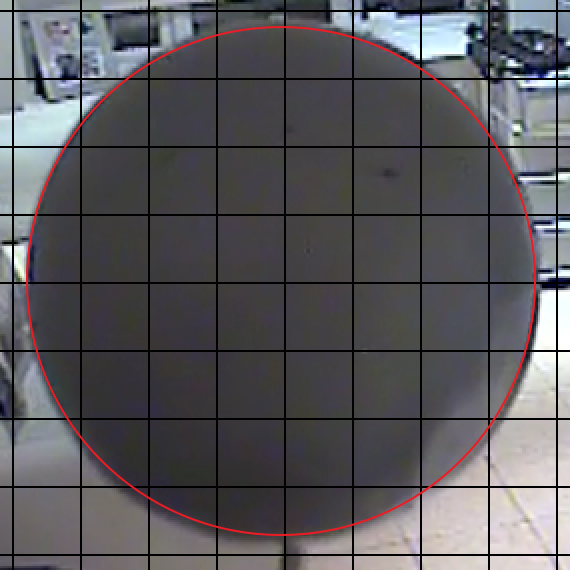

Let us now take a look at the visual information, and use the data learned to correct our video for presentation. To assist in this example, I have placed a sphere into the camera view.

I can see a number of issues.

- There are black borders along the vertical edges; they are not equal and one is slightly wider than the other.

- The footage is interlaced.

- The footage looks ‘squished’.

- There is some distortion caused by the camera’s optical settings.

Following the Image Generation Model, I can correct these in order.

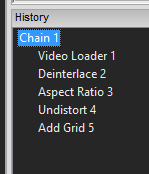

In the Aspect Ratio Filter, I have simply chosen 4:3 with Bicubic Interpolation.

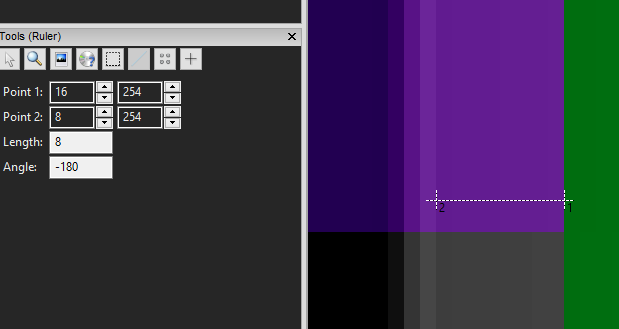

When I now add a grid (Presentation > Add Grid) I can assess the shape of the sphere. I could also use the Ruler tool to measure the width and height.

I can now see that my sphere is still not exactly a sphere. It is still slightly vertically ‘squished’, so where is the problem?

You may recall that in the first introductory post on Aspect Ratio I explained that, as analysts, we have no knowledge about what exactly is being sampled or how the pixels are being created. Could the black borders be a clue? We also can’t assume that the export has been created correctly.

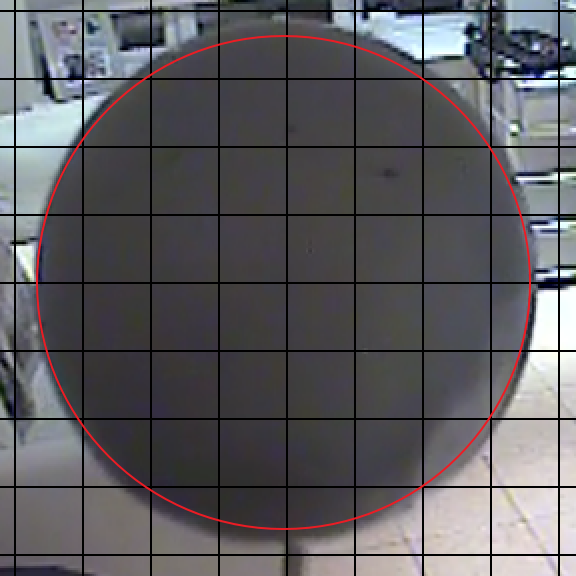

By measuring the black borders, I have established that there are 4 pixels on the left and 10 pixels on the right.

I now have to consider what form of compression has been used. I know from the File Info that it’s H.264. As a result, I need to assess whether some of the colour seen down the edges of the image is the result of interpolation.

The easiest way to achieve this is by utilizing the new macroblock filter to visualize the actual blocks.

I can now see that the first horizontal block has interpolation between the black, along the edge, and the full image. It also has 4 pixels of colour that have been added through this process. Should the image be cropped centrally, resulting in a horizontal resolution of 704 pixels? This would remove 8 pixels from either side.
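The crop arithmetic is simple enough to sketch. This minimal Python example (the function name is hypothetical) works out how many columns a central crop to 704 removes, and what that does to the measured 4 and 10 pixel borders. It only does the sums; whether a central crop is the right choice is still the question being tested.

```python
def central_crop_columns(width, target_width):
    """Return the (left, right) column counts removed by a centred crop."""
    excess = width - target_width
    if excess < 0 or excess % 2:
        raise ValueError("target must be narrower by an even number of columns")
    return excess // 2, excess // 2

left_cut, right_cut = central_crop_columns(720, 704)    # 8 columns from each side

# Black borders measured in this clip: 4 px on the left, 10 px on the right.
left_border, right_border = 4, 10
black_left_after = max(left_border - left_cut, 0)       # 0: the left border is gone
black_right_after = max(right_border - right_cut, 0)    # 2: a thin strip remains
print(left_cut, right_cut, black_left_after, black_right_after)
```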

After adding this crop, which results in a new size of 704 x 576, I can complete the filter chain again. The resulting image has a pixel size of 768 x 576, the same as the first attempt, but using the grid, I can now see that my sphere is much nearer to what it should be.

From this, I have established that the aspect ratio relates to the visible area and not the padding that was added to the edges.

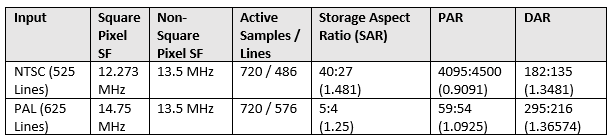

Well then, how would we check this? This time, rather than using the visible area, we need to use all the pixels. So, instead of using a DAR of 1.333 (4:3), we use the DAR calculated from the Sampling Frequency. Here’s the table again from the original post:

From this, I can see that I should enter a DAR of 295:216, or 1.36574.

The result is an image with dimensions of 786 x 576. Why? Well, this time we have kept all the pixels, so we now need to crop centrally to 768 x 576 after the Aspect Ratio filter.
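Here is a rough Python sketch of the sizes produced by the two approaches. The function name is hypothetical, and truncating the fractional width is an assumption I have made so that the result matches the 786 reported above; I am not claiming this is how the filter rounds internally.

```python
from fractions import Fraction

HEIGHT = 576

def width_for_dar(height, dar):
    """Width needed to show `height` rows at the given display aspect ratio.

    The fractional part is truncated (an assumption, chosen to match 786).
    """
    return int(height * dar)

# Approach 1: crop to 704 columns first, then resize to 4:3.
cropped_first_width = width_for_dar(HEIGHT, Fraction(4, 3))      # 768

# Approach 2: keep all 720 columns, resize to 295:216, then crop centrally.
full_frame_width = width_for_dar(HEIGHT, Fraction(295, 216))     # 786.67 truncated to 786
trim_each_side = (full_frame_width - cropped_first_width) // 2   # 9 columns per side
print(cropped_first_width, full_frame_width, trim_each_side)
```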

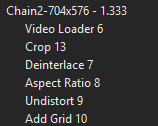

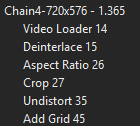

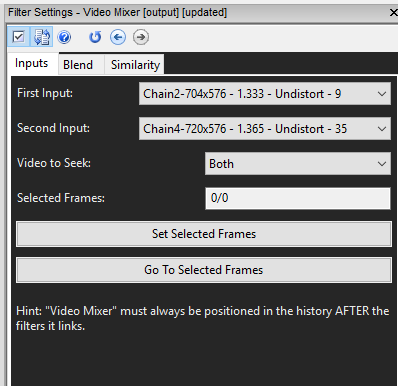

Let’s look at the two filter chains. (The sizes in the Filter Chain names are there to help me, and are not the size of the end result!)

Here’s the first one:

After loading the video I have cropped straight away, and then completed the other filters. Here, my Aspect Ratio was set to 1.333.

In the next test, I loaded the video and used an Aspect Ratio of 1.365.

As a result, I have had to crop at the end.

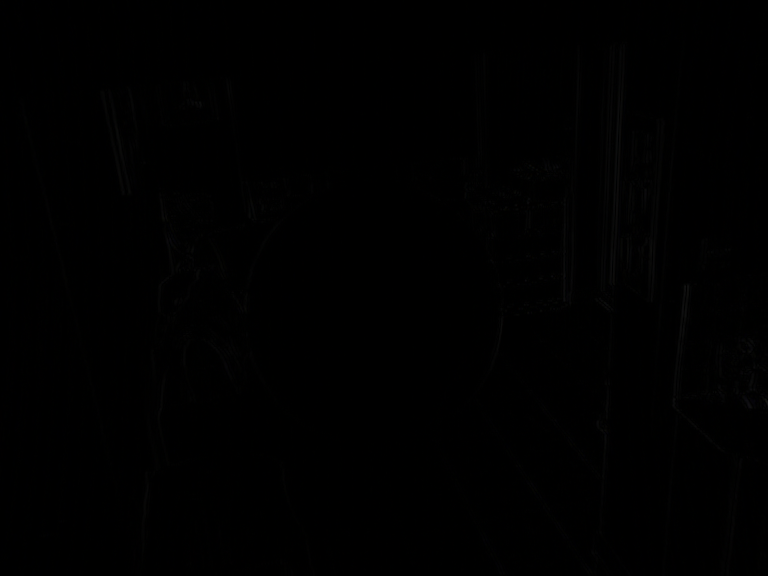

Both images are now 768 x 576 and both spheres visually look the same. I am now able to assess the differences using the Mixer Filter.

The differences are then seen in white.

Can you see the differences? There are not many! Just looking at the two images, the differences are too small for our visual system to pick up.

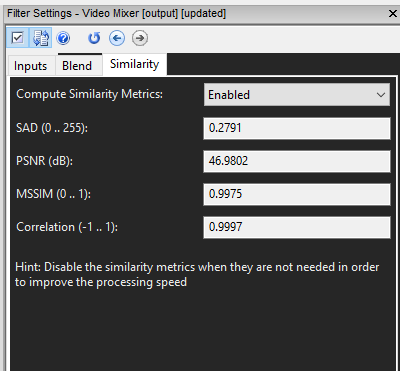

Mathematically, I can see the value of the difference in the Similarity tab.

The Sum of the Absolute Difference (SAD) is 0.2791.
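For anyone unfamiliar with the measure, this is a minimal Python sketch of the textbook definition of SAD on two tiny greyscale images. How FIVE scales or normalises the figure it reports is not covered here, so the per-pixel average in the second function is purely an assumption for illustration, and the pixel values are made up.

```python
def sum_of_absolute_differences(image_a, image_b):
    """SAD over two equally sized greyscale images given as lists of rows."""
    same_shape = len(image_a) == len(image_b) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(image_a, image_b)
    )
    if not same_shape:
        raise ValueError("images must have identical dimensions")
    return sum(
        abs(a - b)
        for row_a, row_b in zip(image_a, image_b)
        for a, b in zip(row_a, row_b)
    )

def mean_absolute_difference(image_a, image_b):
    """SAD divided by the pixel count (an assumed normalisation)."""
    pixels = sum(len(row) for row in image_a)
    return sum_of_absolute_differences(image_a, image_b) / pixels

# Made-up 3 x 2 images that differ in two pixels.
first = [[10, 20, 30], [40, 50, 60]]
second = [[10, 21, 30], [40, 48, 60]]
print(sum_of_absolute_differences(first, second))   # 3
print(mean_absolute_difference(first, second))      # 0.5
```

The smaller the value, the closer the two images are; identical images give zero.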

Before I move on, it is worth reiterating that there has to be a reason for correcting the Aspect Ratio in the first place. Perhaps you wish to identify a vehicle, and if you did not correct the shape, you could receive suggestions that are incorrect. You don’t want a limo looking like a mini (slight exaggeration, but you know what I mean!)

So, both methods above have resulted in very similar images.

Lastly then… which one has the least amount of difference to the original image? There will be a lot in both as we have rescaled them both, but in different ways… and it is here that more study and testing is required.

In my previous testing on re-sizing for Aspect Ratio, I examined the differences between using the Horizontal and Vertical axis. Should we reduce the width for all NTSC footage? Are there circumstances where increasing the height is acceptable as it does not discard data that may be of importance if we reduced? Which Interpolation method is best?

In Amped FIVE, we give you the tools and the filters to conduct these tests and present them within a scientific workflow. Why did you choose a specific route? With FIVE, you can not only explain, but physically present the evidence to show why.

The Aspect Ratio filter is extremely flexible, allowing manual entry of fractions and decimals, selection of which axis to retain, and a choice of Interpolation method.
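To show what the axis choice means in practice, here is a small Python sketch for a 720 x 480 frame corrected to 4:3. The function and its `keep` parameter are hypothetical names of my own, not the filter’s actual options, and rounding to the nearest pixel is an assumption.

```python
from fractions import Fraction

def resize_for_dar(width, height, dar, keep="height"):
    """Return the (width, height) that gives `dar` while retaining one axis."""
    dar = Fraction(dar)
    if keep == "height":
        return round(height * dar), height    # width changes
    if keep == "width":
        return width, round(width / dar)      # height changes
    raise ValueError("keep must be 'height' or 'width'")

# Retain the height: the width is reduced from 720 to 640.
print(resize_for_dar(720, 480, Fraction(4, 3), keep="height"))   # (640, 480)

# Retain the width: the height is increased from 480 to 540.
print(resize_for_dar(720, 480, Fraction(4, 3), keep="width"))    # (720, 540)
```

Both results are 4:3, but one discards horizontal samples and the other interpolates new rows, which is exactly the trade-off that needs testing.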

The selections will come down to the video file being examined and the purpose of examination.

Final Note!

The community discussions, studies and examinations of issues such as Aspect Ratio correction are an important element of our science.

We study the digital data to interpret and present an image, or a sequence of images, through research and analysis. As practitioners of the science we learn as a group and, as such, ensure that the relevance and integrity of FVA are maintained within the legal environment.

There are so many different and unknown methods used by manufacturers of Video Surveillance Systems that attempting to state that everyone should choose a specific method would be very difficult until further studies are completed.

How about the systems that originally capture at 704 x 240 but export out of the DVR at 720×480! The DVR interpolates both ways – what should be chosen then?

As is always the case with FVA, there is no initial data set, and that is one of the reasons why the forensic analysis of multimedia can be challenging. The variables are huge and the unknowns many.

It all comes down to case-by-case analysis, testing, and remembering, above all else, that if it’s not required – don’t do it!