One of the most common tasks in Forensic Video Analysis is the request to perform image comparison. How to achieve this is also one of the most popular questions I get whilst travelling to Police Agencies around the world and delivering Amped FIVE training.

I always have to respond with another question then – “how do you want to present that comparative evidence?” There is often a broad range of answers, due to the differences required by their individual legal systems and their own personal reporting style.

It is for these reasons that there is no definitive answer to how to perform, report or present comparative analysis within FIVE. It gives you the freedom to choose how you are going to conduct the analysis, and then the flexibility to decide how to report and present it.

Before we look at an example, I think it’s important to discuss Comparative Image Analysis…

There are many guidance documents on image comparison, published by a number of international bodies.

SWGDE, in the US, have released the following draft for public comment. I expect this will be formalized in early 2016.

- Best Practices for Photographic Comparison: https://www.swgde.org/documents/published-complete-listing

Then, in the UK we have the following from the Forensic Science Regulator.

- Image Comparison and Interpretation Guidance: https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/405528/Image_Comparison_and_Interpretation_Guidance_Issue_1_160115.pdf

- Cognitive Bias: https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/470549/FSR-G-217_Cognitive_bias_appendix.pdf

- Forensic Video Analysis: https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/351223/2014.08.28_FSR-C-119_Video_analysis.pdf

There are a few others… but you should get the idea from all of these that comparative analysis is not something that is taken lightly. The purpose of the guidance is to ensure that the many challenges involved can be mitigated through a professional and scientific workflow.

That workflow is commonly referred to as ACE-V(R):

- Analyze

- Compare

- Evaluate

- Verify

- Report

There are many obstacles within each stage, so to make things easier, let’s look at this little imaginary project to see how FIVE’s flexibility can make the process much less of a burden.

Analyze

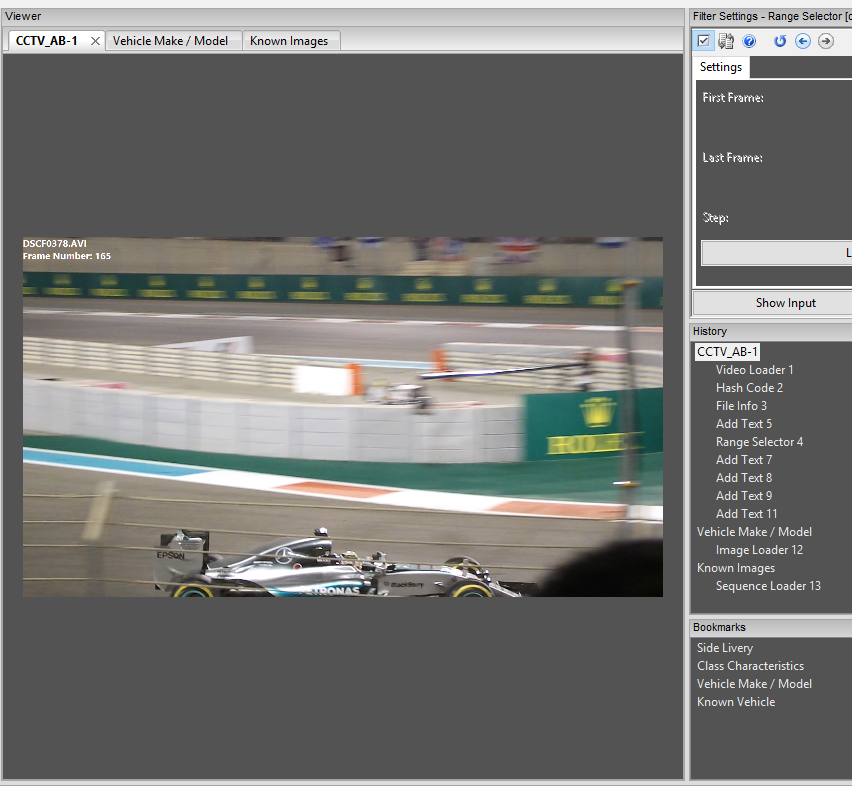

We have this video of a vehicle. I have been requested to analyze the video and perform an initial assessment to identify comparative possibilities. In order to reduce cognitive bias within any comparative process, it is important that a staged approach is taken in both the information and data being received.

A lot of the guidance surrounding comparative work details the importance of the initial analysis and processing of the data. This is particularly relevant to surveillance video where we are often dealing with highly compressed, limited resolution, poorly designed CCTV. As a result, the slightest mistake in acquisition or processing could contaminate our analysis and render all other work inadmissible. Are you observing an object or an artifact of compression?

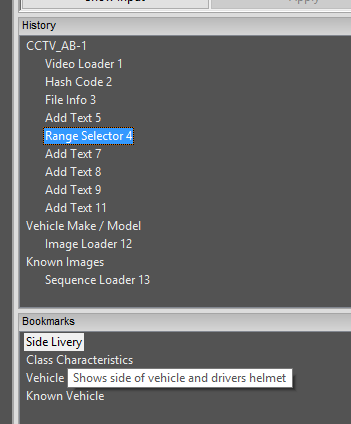

So, in our little example, I have loaded in the video, created a hash value and run some metadata analysis. This metadata is very important, as it details information that affects my analysis, such as the compression type.
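For readers who want to see what creating a hash value involves, here is a minimal sketch in Python. It is not how FIVE computes its hash; the function name `file_hash`, the default SHA-256 algorithm and the chunk size are all assumptions chosen for illustration.

```python
import hashlib
from pathlib import Path


def file_hash(path, algorithm="sha256", chunk_size=1024 * 1024):
    """Return the hex digest of a file, reading it in chunks.

    The algorithm and chunk size are illustrative defaults, not FIVE settings.
    """
    digest = hashlib.new(algorithm)
    with Path(path).open("rb") as handle:
        # Read fixed-size chunks so a large video file never has to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Hashing the working copy again at a later stage and comparing the two digests is a simple way to show that the file has not changed since it was first loaded.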

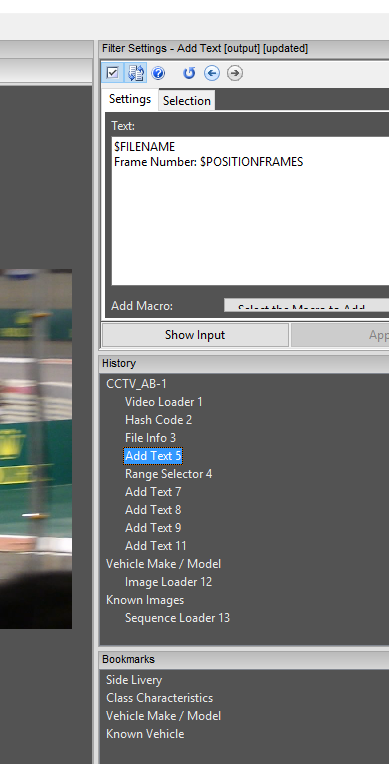

From here I have added in a text overlay of the Filename and Frame Number. Remember, these are automated – just select the Macro needed.

It’s important to do this BEFORE selecting a range of frames. This ensures the Frame number relates to the original file and not your trimmed clip!
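The ordering matters because a trimmed clip restarts its own frame count. The toy model below, which is plain Python rather than anything from FIVE, shows the difference. The filename `exhibit.mp4`, the 500-frame length, the in and out points and the zero-based numbering are all hypothetical.

```python
def label_frames(frame_count, filename):
    """Build one overlay label per frame, numbered from 0 within the given clip."""
    return [f"{filename} | frame {index}" for index in range(frame_count)]


def trim(frames, first, last):
    """Keep frames first..last inclusive, like choosing in and out points."""
    return frames[first:last + 1]


# Overlay first, then trim: the labels still carry the original frame numbers.
labelled_then_trimmed = trim(label_frames(500, "exhibit.mp4"), 120, 122)

# Trim first, then overlay: numbering restarts at 0 and no longer matches the source file.
trimmed_clip = trim(list(range(500)), 120, 122)
trimmed_then_labelled = label_frames(len(trimmed_clip), "exhibit.mp4")

print(labelled_then_trimmed[0])   # exhibit.mp4 | frame 120
print(trimmed_then_labelled[0])   # exhibit.mp4 | frame 0
```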

I have then used the Range Selector to choose my in and out points, and trimmed the video to the area of interest.

Now, in this little example, I have used a standard H264 file, but what if you have extracted some raw video data, and then used Convert DVR to place it in a container? What would happen if you had done this, and also obtained a screen capture of the footage? The only difference would be more chains! You may then use the Video Mix filter to identify what differences there are between the various export methods. From here you can identify which video is the best one to use for the comparative analysis.
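One simple way to think about comparing two exports of the same frame is a per-pixel absolute difference. The sketch below is not FIVE’s Video Mix implementation. It assumes two grayscale frames of identical size held as nested lists, and the function names and sample values are made up for illustration.

```python
def absolute_difference(frame_a, frame_b):
    """Return the per-pixel absolute difference of two equally sized grayscale frames."""
    same_rows = len(frame_a) == len(frame_b)
    same_cols = all(len(row_a) == len(row_b) for row_a, row_b in zip(frame_a, frame_b))
    if not (same_rows and same_cols):
        raise ValueError("frames must have identical dimensions")
    return [
        [abs(a - b) for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]


def difference_summary(diff):
    """Summarise a difference image: largest change, mean change, changed pixel count."""
    values = [value for row in diff for value in row]
    return {
        "max": max(values),
        "mean": sum(values) / len(values),
        "changed_pixels": sum(1 for value in values if value != 0),
    }


# Hypothetical 2x2 frames: one from the converted container, one from a screen capture.
container_frame = [[10, 20], [30, 40]]
screen_capture_frame = [[10, 22], [27, 40]]

diff = absolute_difference(container_frame, screen_capture_frame)
print(diff)                      # [[0, 2], [3, 0]]
print(difference_summary(diff))  # {'max': 3, 'mean': 1.25, 'changed_pixels': 2}
```

An all-zero difference would suggest the two export methods produced identical pixels for that frame; anything else tells you where, and by how much, they diverge.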

After selecting my range of frames, I can visually analyze the video. There are a number of different ways to achieve this next bit, and it all depends on how many images you have.

As seen in my Bookmarks window above, I have simply bookmarked my frame of interest, renamed it ‘Side Livery’ and then placed a description into that bookmark.

If I had a number of frames that I wished to use, I could bookmark a number of them and then export them as separate images. When I do this, I rename my images as the frame number. I can then load them back in as individual chains.
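If you rename exported images outside FIVE, a small helper keeps the frame number recoverable from the filename. This is only a sketch of one possible naming scheme: the zero padding, the `png` extension and both function names are my assumptions. The padding is there so that an alphabetical file listing matches frame order when the images are loaded back in.

```python
import re


def frame_filename(frame_number, extension="png", width=6):
    """Name an exported image after its frame number, zero-padded to a fixed width."""
    return f"{frame_number:0{width}d}.{extension}"


def frame_number_from(filename):
    """Recover the frame number from a name produced by frame_filename."""
    match = re.fullmatch(r"(\d+)\.\w+", filename)
    if match is None:
        raise ValueError(f"not a frame-numbered filename: {filename!r}")
    return int(match.group(1))


# Hypothetical bookmarked frames, deliberately out of order.
names = [frame_filename(number) for number in (1432, 87, 950)]
print(sorted(names))  # ['000087.png', '000950.png', '001432.png']
```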

Back to the example, I have then added in a number of text filters…

To save changing my text format each time, I simply copied and pasted the first one, then just moved them and changed the number.

You can also see that I have added in a new bookmark and included all the details as a description. So, I have identified 4 class characteristics. Remember that this bookmark name and description will be added to my automatic report – no duplication needed!

After the initial analysis, understanding the video format, identifying the evidential video to use and then documenting class, and perhaps unique, characteristics, I can now automatically create my report. At this point, it’s time to receive the images of the known item or receive the item directly. This is the staged approach that can avoid a number of detrimental bias issues.

Compare

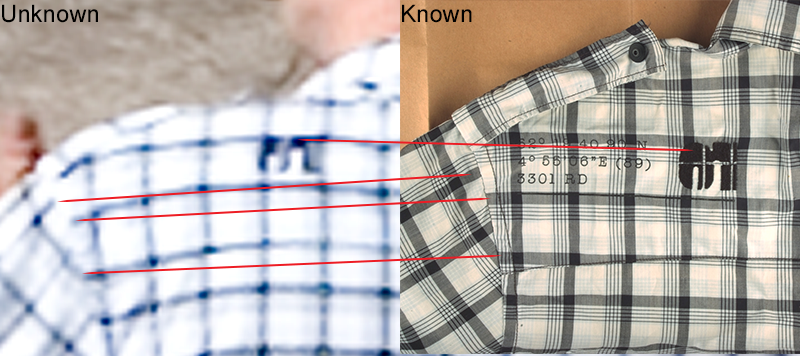

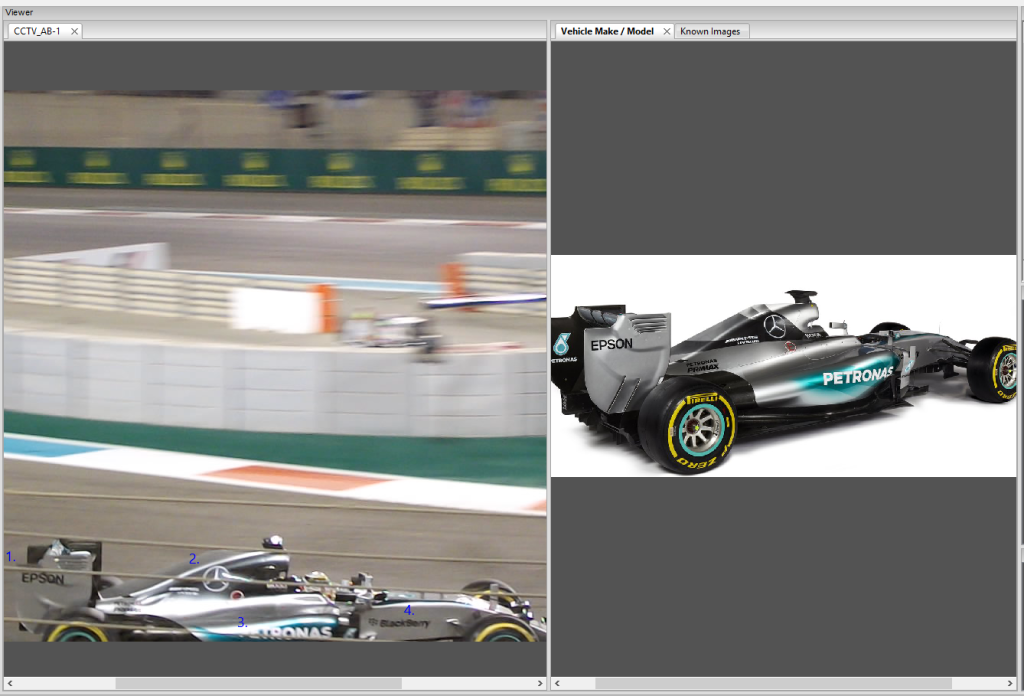

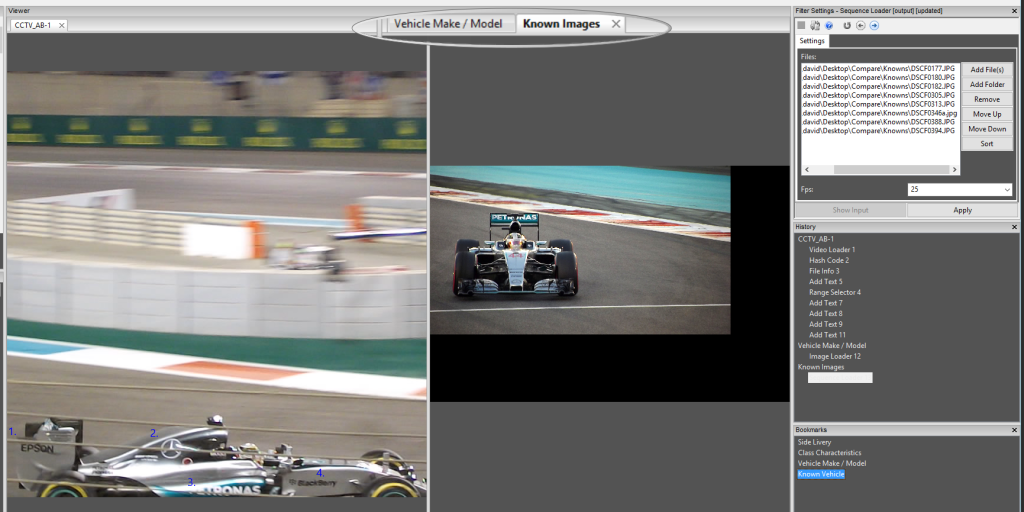

One of the many features that people seem to forget is the ability to have multiple window chains open. In the example above, I have my Video exhibit chain open in the left window and then two chains open in the right window.

My first chain in the right window is a single image.

The second chain in the right window is a series of images loaded in as a sequence…

These images could be a series taken by a Crime Scene Photographer. This is an easy way of moving through images to find the best one to suit your need.

From here, it’s really down to the individual case and the requirements of what you need to do.

So far, we have seen how flexible FIVE is when analyzing and documenting the comparative process, and then comparing images and video to other images or video. I actually find that more comparisons are of unknown to unknown, where it’s comparing an object in one piece of terrible video to another one – usually just as poor!

It all comes down to chain and data / image management.

Evaluate

Going back to our ACE-V(R), we have got to the Evaluate section. At this point I must question my comparative results, especially when dealing with Unique characteristics. I may also have to evaluate any restoration or enhancement process I have used to identify any Class or Unique characteristics. I may have to return to the scene and place known items back into the scene under similar conditions. I may have to identify the motion estimation in the MPEG structure to ensure that a unique mark is not an artifact of compression or something left over from a previous macroblock.
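As a rough aid when asking whether a mark could be a block artifact, it can help to know which macroblocks the mark falls in. The sketch below assumes a 16x16 macroblock grid aligned to the top-left corner of an uncropped, unscaled frame. Real encoders may subdivide blocks further, so treat this as a first check only. The function names and coordinates are hypothetical.

```python
MACROBLOCK_SIZE = 16  # assumed 16x16 luma macroblocks


def macroblock_of(x, y, size=MACROBLOCK_SIZE):
    """Return the (column, row) of the macroblock containing pixel (x, y)."""
    return (x // size, y // size)


def macroblocks_covering(left, top, right, bottom, size=MACROBLOCK_SIZE):
    """Return every (column, row) macroblock touched by an inclusive pixel bounding box."""
    first_col, first_row = macroblock_of(left, top, size)
    last_col, last_row = macroblock_of(right, bottom, size)
    return [
        (col, row)
        for row in range(first_row, last_row + 1)
        for col in range(first_col, last_col + 1)
    ]


# Hypothetical mark with a bounding box from (30, 10) to (35, 17).
print(macroblocks_covering(30, 10, 35, 17))  # [(1, 0), (2, 0), (1, 1), (2, 1)]
```

A mark whose edges line up exactly with block boundaries, or which sits wholly inside a single block, deserves a closer look at the neighbouring frames before it is treated as a feature of the object.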

Whatever is required, all the new images and video will be loaded into the project.

Presenting comparative evidence can be quite a challenge, but all you are doing is telling the story…

This is the frame from the video, these are the points of interest, these are some more… This is the known item, these points match… I have checked these details by placing the item back into the scene, and the points are still present.

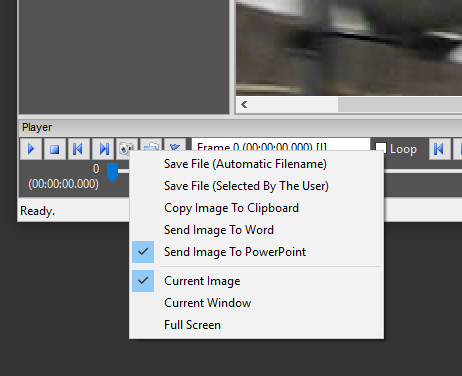

With FIVE’s ability to select specific images and then send them to a specific software package, you can go through the project and simply click ‘snapshot’ when you want the image to tell that part of the story. Here I am sending my images to a PowerPoint document.

All my images have the frame number on already so a full comparative presentation can be built in minutes.

Verify

Verification of any comparative conclusions ensures that there is repeatability in the process. Not only would I normally conduct some cross-program comparison, but it’s also vitally important for another analyst to review and verify the conclusions. This ensures that any quality control issues that may affect the outcome are identified.

Report

After Peer Review, it’s time for reporting. All of my work is contained within the single project, and with FIVE’s ability to export to HTML, PDF or DOC, whatever method is required is catered for.

I hope that this has given you some ideas of how to use FIVE when conducting comparative analysis. We have a number of product updates, designed specifically to assist you further in this task, that will be rolled out over the next few months.

As always, if you have a specific feature request – just drop us an email. If you want it to do something, you can bet others will want it to!