April Fools’ Day has just passed, and we hope nobody played a nasty trick on you! Alas, for people working with digital evidence, the risk of being fooled by an ambiguous finding is always around the corner. In digital image forensics, one of the most frequent pitfalls is the false positive produced by a forgery localization algorithm (that is, when an algorithm marks as manipulated a region that was actually left untouched). Today’s Tuesday Tip deals with these false positives and shows how Amped Authenticate helps you rule out some of them.

Let us begin this Tip with a sample case to work on: we are given the image below (a JPEG adaptation of one of the images in the IEEE IFS-TC Forensics Challenge image corpus) and asked to investigate the authenticity of its content.

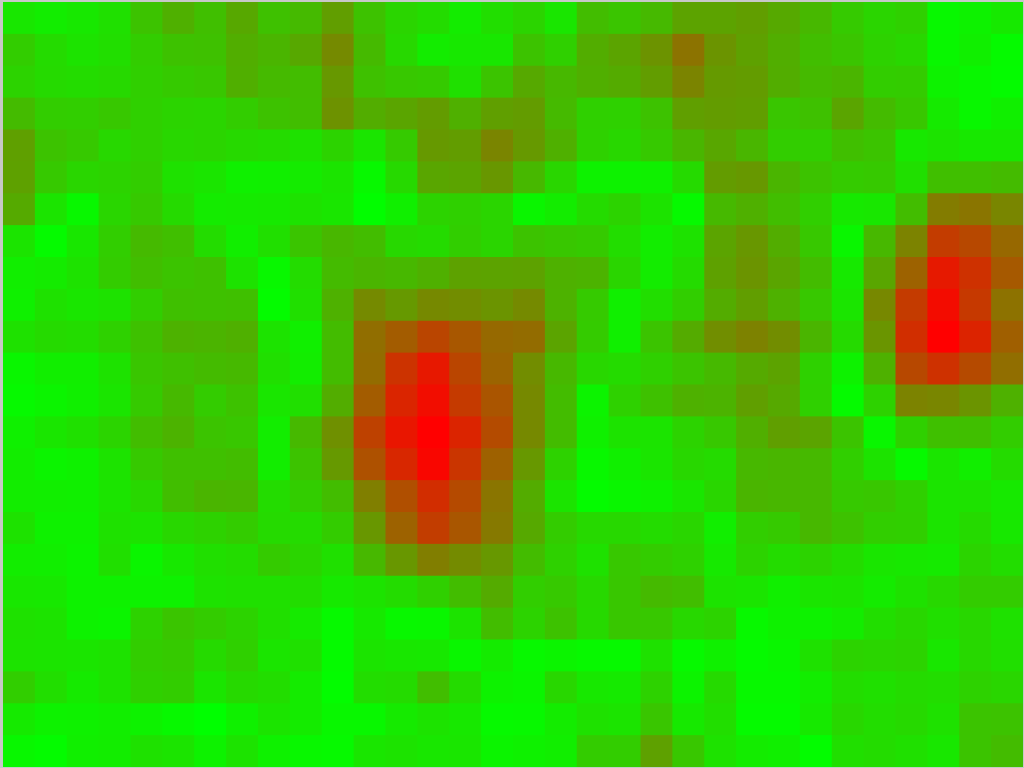

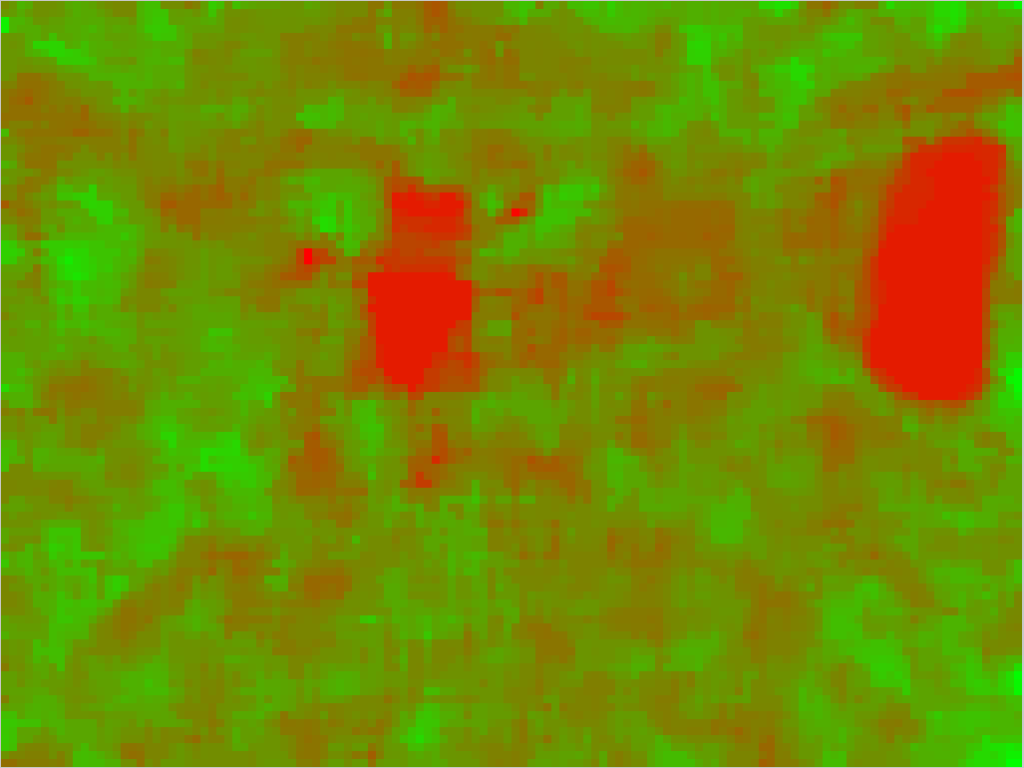

For the sake of conciseness, we skip the usual File Analysis and Global Analysis and go straight to the Local Analysis, looking for possibly manipulated regions. We run some of the algorithms featured in Amped Authenticate, and get these forgery localization maps:

Ok, the pattern is pretty clear. We have two regions standing out in all maps: one on the right, corresponding to the empty notice board in the image, and one in the center, corresponding to the door at the end of the corridor. Should we conclude that both regions have been tampered with? Well… not so fast!

If we take a moment and look at the image content, we see that the two regions are entirely different. Pixels in the notice board have average brightness and are quite uniform, similar in some sense to those of the wooden door just next to it. Pixels at the end of the corridor, instead, are saturated to white. While this kind of difference hardly matters to a human observer, it makes a great difference for a forgery localization algorithm. Almost all forgery localization algorithms, indeed, are much less reliable on extremely bright regions (saturated to white), extremely dark regions (saturated to black), or regions with extremely textured or flat content.

Why is this so? It’s hard to tell in general without making harsh simplifications. Still, we can say that most forgery localization algorithms work by distinguishing manipulated parts of the image from untouched ones, and they do that on a statistical basis. If the content of a region is itself “anomalous” compared to the rest of the image, it will be harder for the algorithm to understand whether this difference is due to manipulation or to intrinsic properties of the depicted scene. In the extreme case of all-white pixels, it becomes nearly impossible to tell whether those pixels were white from the very beginning or were added later: in both cases, they’re just a matrix of identical numbers (the exact same reasoning applies to black pixels)!
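To make the "matrix of identical numbers" point concrete, here is a minimal Python sketch that flags near-white and near-black pixels in an 8-bit grayscale image. It is only an illustration and not how Amped Authenticate works internally: the function name `saturation_masks` and the thresholds `low` and `high` are assumptions chosen for this example.

```python
import numpy as np

def saturation_masks(gray, low=5, high=250):
    """Return (dark, bright) boolean masks for an 8-bit grayscale image.

    `low` and `high` are illustrative thresholds, not values taken
    from any specific tool.
    """
    gray = np.asarray(gray)
    dark = gray <= low      # pixels saturated (or nearly so) to black
    bright = gray >= high   # pixels saturated (or nearly so) to white
    return dark, bright

# Toy 4x6 image: mid-gray everywhere, a fully white block on the left,
# and a single black pixel in the top-right corner.
img = np.full((4, 6), 128, dtype=np.uint8)
img[:, :2] = 255
img[0, 5] = 0

dark, bright = saturation_masks(img)
print(int(bright.sum()), int(dark.sum()))  # 8 1

# The white block carries no statistical signal at all: zero variance.
white_block_std = float(img[:, :2].std())
print(white_block_std)  # 0.0
```

A region with zero variance gives a statistical detector nothing to work with, which is why its output there should not be trusted either way.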

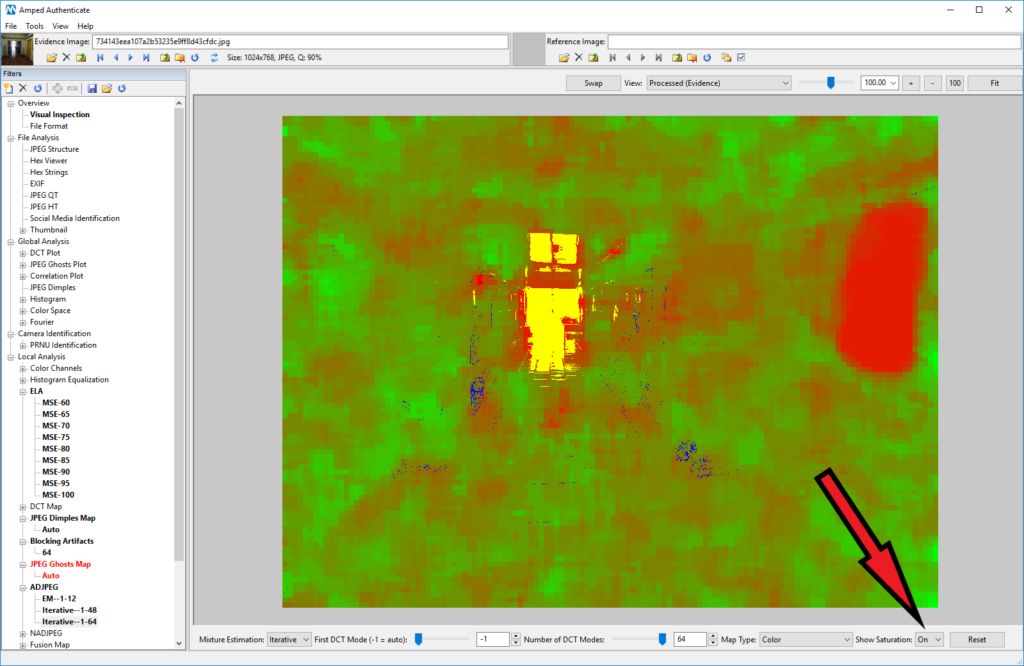

For this reason, many filters in the Local Analysis category of Amped Authenticate feature a post-processing option called Show S

This is intended to be a simple yet helpful aid for the analyst: the output in yellow and blue regions should not be trusted. In our example, we see that the door at the end of the corridor is correctly marked in yellow, so we are only left with the notice board to reason about. Is the notice board red because of its “natural content”, or is it because of manipulation? The door to answering this question is… the door! The wooden door next to the notice board, indeed, is very similar in terms of pixels, but it looks entirely different in the forgery localization maps: there, the wooden door blends in with the rest of the image, while the notice board stands out clearly. Based on what we have, we conclude that the notice board has likely been tampered with (which is indeed the case: we have the ground truth for images in this dataset!).
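The reasoning above can be sketched in a few lines of Python: summarize the localization map inside each region of interest while ignoring pixels flagged as unreliable, then compare regions with similar content. This is a hedged illustration under stated assumptions, not Amped Authenticate's method: `reliable_region_mean`, the toy score map, and the region masks are all hypothetical.

```python
import numpy as np

def reliable_region_mean(score_map, region, unreliable):
    """Mean map score inside `region`, ignoring `unreliable` pixels.

    Returns None when no trustworthy pixel is left in the region.
    All names here are hypothetical, for illustration only.
    """
    keep = region & ~unreliable
    if not keep.any():
        return None
    return float(score_map[keep].mean())

# Toy 4x6 localization map (higher score = more suspicious).
scores = np.full((4, 6), 0.25)
scores[:, 4:] = 0.75          # "notice board" stands out
scores[:, :2] = 0.75          # "corridor door" also stands out...

unreliable = np.zeros((4, 6), dtype=bool)
unreliable[:, :2] = True      # ...but it is saturated, so it is flagged

corridor_door = np.zeros((4, 6), dtype=bool); corridor_door[:, :2] = True
wooden_door = np.zeros((4, 6), dtype=bool);   wooden_door[:, 2:4] = True
notice_board = np.zeros((4, 6), dtype=bool);  notice_board[:, 4:] = True

print(reliable_region_mean(scores, corridor_door, unreliable))  # None
print(reliable_region_mean(scores, wooden_door, unreliable))    # 0.25
print(reliable_region_mean(scores, notice_board, unreliable))   # 0.75
```

In this toy setup the saturated region yields no usable evidence, while the two regions with similar content get clearly different scores, which mirrors the wooden door versus notice board comparison.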

This week’s takeaway is: always think twice! And while we’ll strive to give you more and more useful tips from week to week, remember that the best way to gain real insight into these topics is to attend an Amped Authenticate training! In our courses, we spend a lot of time on samples where students can practice telling false positives and false negatives apart from trustworthy results.