If you’ve attended one of my classes or lectures, you’ve likely heard me say this phrase many times: “There’s what you know, and there’s what you can prove.” The essence of this statement forms the basis of the criminal justice system as well as of science.

What I “know” is subject to bias. What I “know” is found in the realm of truth. As a Kansas City Chiefs supporter, I “know” that the Oakland Raiders are a horrible team. I “know” that their fans are the worst in the world. After all, the Chiefs are the best and their fans are as pure as the wind-driven snow. This is “true” to me. While this example is meant to be funny and to illustrate a point (I’m sure there are some really great people among the Raiders fan base), truths are things we “know.” Truths are rooted deep in feelings and emotions and are unlikely to be changed by facts. There is a segment of the US population that believes it is true that Elvis is still alive and likely hanging out on some Caribbean island with Tupac and Biggie Smalls.

Facts are measurable; they form the basis of tests of reliability. I can measure the temperature in a specific location and you, standing in the same location, can perform the same test and come to the same measurement. Supported by facts, our tests in this discipline become reliable, repeatable, and reproducible. Our conclusions can thus be trusted.

What on earth does this all have to do with Amped FIVE and Forensic Multimedia Analysis? I’m glad you asked.

By now, you’re well aware that Amped Software operationalizes tools drawn from image science, math, statistics, and other fields. We also operationalize tools and training from the world of psychology. By this I mean that if we’re going to work in the visual world, we must understand how that world operates, not only mechanically but also in terms of how the brain processes the inputs from its collection devices.

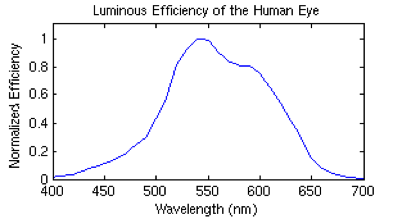

About those collection devices: your eyes. Whether you believe in a heavenly creator or ancient alien astronauts, our eyes all share the same mechanical properties. Yes, we have rods and cones; we learned this in primary school.

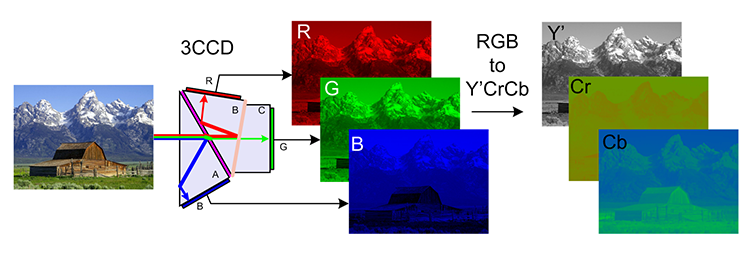

Our eyes are very sensitive to slight changes in brightness and much less sensitive to changes in color. Additionally, our visual system is most sensitive to green, then red, and lastly blue. If you consider the standard conversion formula from RGB to YCbCr, you can see each channel’s contribution to the luma (Y):

Y = 0.299R + 0.587G + 0.114B
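
The weights in this formula are the ITU-R BT.601 luma coefficients. As a quick illustration of how lopsided they are, here is a minimal Python sketch (the helper name `luma_bt601` is hypothetical, not an Amped FIVE function) that computes Y for full-intensity red, green, and blue:

```python
# Luma weights from the formula above (ITU-R BT.601).
WEIGHTS = {"R": 0.299, "G": 0.587, "B": 0.114}


def luma_bt601(r, g, b):
    """Return the luma (Y) of an 8-bit RGB triple using BT.601 weights."""
    return WEIGHTS["R"] * r + WEIGHTS["G"] * g + WEIGHTS["B"] * b


# Full-intensity primaries: the same value of 255 yields very different brightness.
for name, rgb in [("red", (255, 0, 0)), ("green", (0, 255, 0)), ("blue", (0, 0, 255))]:
    print(f"{name:>5}: Y = {luma_bt601(*rgb):6.2f}")
```

The same value of 255 produces roughly twice the luma in green as in red, and about five times as much as in blue.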

Why is this important to know? Engineers know this and use it in the design of systems that capture visual data. They know that we’re not good at processing colors (especially red and blue), so they choose to acquire and encode images in a way optimized for our perception.

In fact, in most imaging sensors, half of the pixels are set to acquire green light and only one quarter each to acquire red and blue. Furthermore, during compression the blue and red chroma channels are often downsampled to a lower resolution than the luma (which mainly carries the green contribution) and quantized more strongly. This isn’t a matter of high-end versus low-end; I’ve seen it across the spectrum of devices.
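
To make those proportions concrete, here is a small Python sketch. It assumes the common RGGB Bayer layout (other color filter arrangements exist) and 4:2:0 chroma subsampling, which is one of several schemes alongside 4:2:2 and 4:4:4. Both function names are hypothetical, chosen for illustration.

```python
def bayer_rggb_counts(width, height):
    """Count photosites per color in an RGGB Bayer mosaic of the given size."""
    counts = {"R": 0, "G": 0, "B": 0}
    for y in range(height):
        for x in range(width):
            if y % 2 == 0:
                color = "R" if x % 2 == 0 else "G"  # even rows: R G R G ...
            else:
                color = "G" if x % 2 == 0 else "B"  # odd rows:  G B G B ...
            counts[color] += 1
    return counts


def yuv420_sample_counts(width, height):
    """Samples stored per plane with 4:2:0 chroma subsampling (even dimensions assumed)."""
    luma = width * height
    chroma = (width // 2) * (height // 2)  # one Cb and one Cr sample per 2x2 block
    return {"Y": luma, "Cb": chroma, "Cr": chroma}


counts = bayer_rggb_counts(640, 480)
total = sum(counts.values())
for color, n in counts.items():
    print(f"{color}: {n / total:.0%} of photosites")

planes = yuv420_sample_counts(640, 480)
print(planes)
```

For a 640×480 frame, green gets half of the photosites, and each chroma plane keeps only a quarter as many samples as the luma plane.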

Here’s the problem illustrated. The picture above is by Hal Bergman, a popular photographer. Some of the questions investigators will want to ask deal with color. For example, what color clothing is being worn? They’ll also focus on skin color and tone to make judgments about race when building a description of the people depicted.

But can this picture be trusted for the purpose of describing color? What is true? What is fact? How would you know if you weren’t there at the time of the capture to measure the values in the scene? Can you measure “then” in science? How does “then” compare to “now” in terms of measurement of light and color? One can only measure “now.” “Then,” in either direction of time, must be predicted. This is why meteorologists forecast (predict) the weather: they can’t measure next week’s weather today.

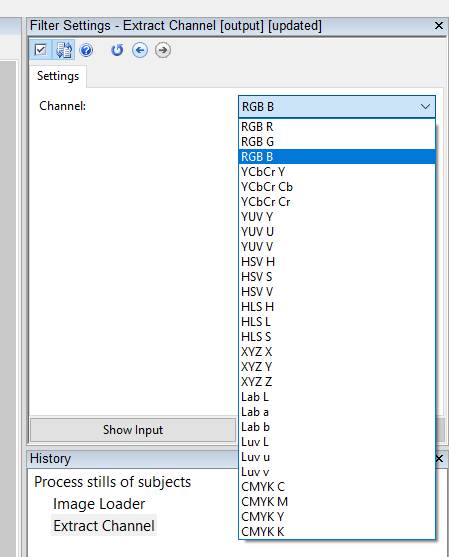

First of all, image capture devices are colorblind, and so are image processing systems. They don’t “see” or process color as such; they work in the world of color modes and color channels. Most of the images and videos we see and process in our software use the RGB mode, but there are many others. For example, the ads in our mailbox are printed using a four-color process (CMYK). In the CCTV world, each color channel is a greyscale image, and the software does a bit of magic in combining the channels into a color representation.
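
As a sketch of what “color mode” means in practice, here is the textbook naive conversion from RGB to CMYK in Python. It is a deliberate simplification: real print workflows use ink and paper color profiles, and `rgb_to_cmyk` is a hypothetical helper, not part of any Amped tool. The point is that the same pixel can be described by three numbers in one mode or four in another, and each of those numbers, taken across the whole image, is just a greyscale plane.

```python
def rgb_to_cmyk(r, g, b):
    """Naive 8-bit RGB -> CMYK as fractions in 0..1 (ignores ink/paper profiles)."""
    rf, gf, bf = r / 255, g / 255, b / 255
    k = 1 - max(rf, gf, bf)  # black ink covers whatever no channel reaches
    if k == 1:  # pure black: avoid dividing by zero below
        return 0.0, 0.0, 0.0, 1.0
    c = (1 - rf - k) / (1 - k)
    m = (1 - gf - k) / (1 - k)
    y = (1 - bf - k) / (1 - k)
    return c, m, y, k


# Pure red on screen is printed as full magenta plus full yellow ink.
print(rgb_to_cmyk(255, 0, 0))  # (0.0, 1.0, 1.0, 0.0)
```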

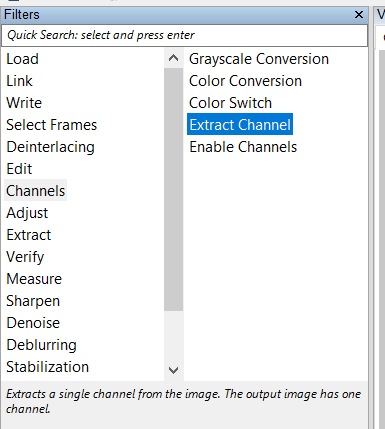

If you extract a single RGB channel, you can see its intensities on their own. Using “Extract Channel” in Amped FIVE, we can view the RGB – Blue channel by itself. Let’s do that and look at the blue channel of the above image.
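
Conceptually, extracting a channel just keeps one of the three numbers stored for each pixel. The Python sketch below is not how Amped FIVE implements “Extract Channel”; it assumes an image held as nested lists of 8-bit (R, G, B) tuples, and both `extract_channel` and the pixel values are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # 8-bit (R, G, B)
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def extract_channel(image: List[List[Pixel]], channel: str) -> List[List[int]]:
    """Return one RGB channel as a greyscale plane with the same width and height."""
    i = CHANNEL_INDEX[channel]
    return [[pixel[i] for pixel in row] for row in image]


# A tiny 2x3 image under warm, yellowish light: strong R and G, weak B.
image = [
    [(220, 180, 40), (210, 170, 35), (200, 160, 30)],
    [(190, 150, 25), (180, 140, 20), (170, 130, 15)],
]

blue = extract_channel(image, "B")
for row in blue:
    print(row)
```

The output is a plain 2D grid of intensities, which is why an extracted channel displays as a greyscale image.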

The selection is returned and shown below.

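As often happens, this scene is lit by artificial light, which gives a yellowish illumination with very little blue component. With so little blue signal, the blue channel uses only a handful of intensity levels and the sensor noise is large relative to the signal. The result is a very coarsely quantized and noisy blue channel.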
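
To see why a weak channel ends up coarse and noisy, here is a small Python simulation. It is not a model of any particular camera: the mean level of 10, the noise sigma of 2, the stretch range, and the helper names are all assumed values chosen for illustration.

```python
import random
import statistics

random.seed(42)  # fixed seed so the illustration is reproducible


def flat_patch(n, mean, noise_sigma):
    """Hypothetical 8-bit samples of one uniform surface: constant signal plus Gaussian noise."""
    return [min(255, max(0, round(random.gauss(mean, noise_sigma)))) for _ in range(n)]


def stretch(values, lo, hi):
    """Linearly map [lo, hi] onto [0, 255], as a brightness/contrast correction might."""
    gain = 255 / (hi - lo)
    return [min(255, max(0, round((v - lo) * gain))) for v in values]


# Weak blue under yellowish light: the whole patch sits around level 10 of 255.
blue = flat_patch(1000, mean=10, noise_sigma=2.0)
boosted = stretch(blue, lo=0, hi=20)

print("distinct levels before:", len(set(blue)))
print("distinct levels after: ", len(set(boosted)))
print("noise std before: %.1f" % statistics.pstdev(blue))
print("noise std after:  %.1f" % statistics.pstdev(boosted))
```

Stretching cannot create new levels; it only spreads the few existing ones further apart and scales the noise by the same gain as the signal.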

In fact, the RGB – B channel is full of noise and other garbage. The garbage completely obscures the subjects’ faces. It dominates the scene and will affect any color or light correction applied to the image. It has essentially corrupted the color representation within the image.

You might have noticed that some images and videos don’t respond well to automatic color corrections, and that manual work on color and light can be very frustrating. A garbage-filled RGB – B channel is a likely reason.
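
One classic automatic white-balance heuristic, the gray-world assumption, scales each channel so that all three channel means match. It is used here only as an example; this is not a claim that Amped FIVE or any particular product works this way. The sample values and the helper `gray_world_gains` are hypothetical.

```python
import statistics


def gray_world_gains(r, g, b):
    """Per-channel gains that equalize the channel means (gray-world assumption)."""
    means = [statistics.fmean(c) for c in (r, g, b)]
    target = statistics.fmean(means)
    return [target / m for m in means]


# Hypothetical warm-lit samples: strong R and G, weak and noisy B.
r = [200, 205, 198, 202]
g = [160, 158, 162, 160]
b = [12, 8, 15, 5]

gains = gray_world_gains(r, g, b)
print("gains (R, G, B):", [round(k, 2) for k in gains])
print("blue noise std before: %.1f" % statistics.pstdev(b))
print("blue noise std after:  %.1f" % statistics.pstdev([v * gains[2] for v in b]))
```

When blue is nearly absent, the blue gain is large, and it amplifies the blue noise by exactly the same factor as the blue signal.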

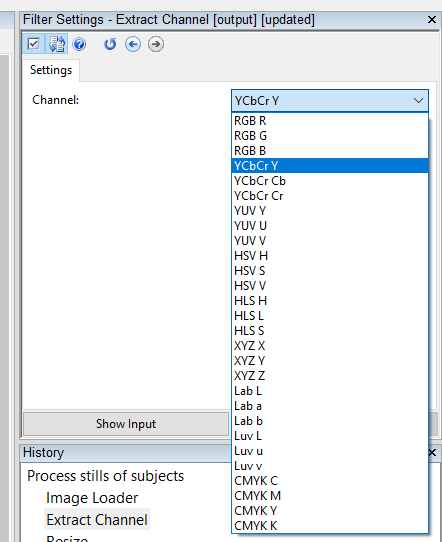

The above image was shot with a Canon EOS camera, a rather high-end device. Most DVRs that we see at crime scenes are essentially a $10 box of whatever parts were available on the day the sales order was placed. High-end or low-end, this problem remains constant. If color is unreliable because of problems within the channels of the source, then it’s a better choice to focus on the light and contrast within the scene. We’ll convert this to greyscale using only the luminance available in the image. In the psychology of visual perception, if we get color wrong, people will get their judgments wrong. This leads to both Type I and Type II errors: false positives (false hits) and false negatives (misses).

FIVE gives the user the ability to work in a variety of color modes. The YCbCr mode features separate channels for luminance and chrominance: Y is the luma component, and Cb and Cr contain the blue-difference and red-difference chroma components. The YCbCr space was designed with human perception in mind, since our visual system processes brightness and color along largely separate pathways. In the same way, this mode separates light from color, which means that problems in the color channels can be mitigated by extracting and focusing on luminance.
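
For reference, here is a Python sketch of the RGB-to-YCbCr conversion using the full-range BT.601 coefficients found in JPEG/JFIF. Video systems often use limited-range (studio swing) variants with different offsets and scales, so treat the exact numbers as one common flavor rather than the only one; `rgb_to_ycbcr` is a hypothetical helper.

```python
def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 (JPEG/JFIF-style) conversion of an 8-bit RGB triple."""
    y = 0.299 * r + 0.587 * g + 0.114 * b             # luma
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b  # blue-difference chroma
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b  # red-difference chroma
    return y, cb, cr


# Neutral greys carry no color: Cb and Cr sit at the midpoint (128).
print(rgb_to_ycbcr(100, 100, 100))
# A warm, yellowish pixel: high Y, Cb well below 128 (little blue).
print(rgb_to_ycbcr(220, 180, 40))
```

The Y line is the same luma formula given earlier; all of the color information is pushed into Cb and Cr.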

Because this mode separates color and light, extracting the YCbCr – Y channel lets us process our files in a more “perceptually accurate” fashion.
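
To put a number on that claim, here is a hedged Python sketch. It assumes a flat patch where only the blue channel is noisy and red and green are perfectly clean, which real footage never is; every value is hypothetical. Because Y is a weighted sum, noise confined to blue reaches the luma channel scaled by the blue weight of 0.114.

```python
import random
import statistics

random.seed(7)  # fixed seed for a reproducible illustration

W_R, W_G, W_B = 0.299, 0.587, 0.114  # BT.601 luma weights

# Hypothetical flat, warm-lit patch: clean R and G, blue corrupted by noise.
n = 2000
r = [200.0] * n
g = [160.0] * n
b = [10 + random.gauss(0, 4.0) for _ in range(n)]

# Luma computed pixel by pixel from the three channels.
y = [W_R * rv + W_G * gv + W_B * bv for rv, gv, bv in zip(r, g, b)]

print("blue noise std: %.2f" % statistics.pstdev(b))
print("luma noise std: %.2f" % statistics.pstdev(y))
```

In real footage every channel carries some noise and compression damage, so the benefit is smaller, but the principle holds: the channel that is weakest under warm light contributes least to Y.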

The results of this channel extraction are much easier to work with from a variety of standpoints. Focusing on luminance allows the viewer to concentrate on features without having their judgment skewed by problems with color.

It’s important to remember that 2% to 10% of the human population does not process the perceptual world in the same way as the other 90% to 98%. You may be a typically wired human, but do not assume that the Trier of Fact is as well. This fact has formed the basis of my doctoral and post-doctoral work. If you follow me on LinkedIn, you’ll know that I’m one of the minority of humans who are not typically wired. This is why I spend some time in my training classes teaching students to ground their work in the world of facts: the last mechanical device that will project or display our work, and the combined perceptual abilities of the Trier of Fact (the people who will view our work in order to form judgments). Again, if we get color wrong, they may come to the wrong conclusion.

As part of the file Content Triage step in the workflow, inspecting channels is an important process. Amped FIVE gives you the ability to move through a variety of color modes to find the many problems that result from engineering and product design choices, and to solve them in a reliable, repeatable, and reproducible way.

If you’d like more information about Amped FIVE or our other amazing tools and training options, contact us today.