The human visual system is highly complex. Linked with our memories, our brains can quickly interpret shapes, objects and people in ways that someone without the added bias of memory could not. That’s how people recognize others in poor CCTV footage. It’s that unique knowledge of a person or object that makes the recognition possible. Read on and learn more about how our mind deals with authenticity versus interpretation.

Our minds can also interpret information differently, not only through our experiences but also through our emotions.

What do you see below?

Most of you would have probably seen this image before, but can you remember back to when you saw it first? Did you see the old lady or the young one? Is it an ear or an eye? People can interpret the image differently.

How about this?

OK, it’s not a trick question! But you all said “a cat”, right? Correct!

The point of all this is that our minds are using half a black and white shape to tell us that it’s a cat.

From there we have two possible options… The first is that it is actually a cat. The second is that it is a single shape, or a series of shapes or objects, positioned in such a way that we interpret them as one, and we only perceive it to be a cat.

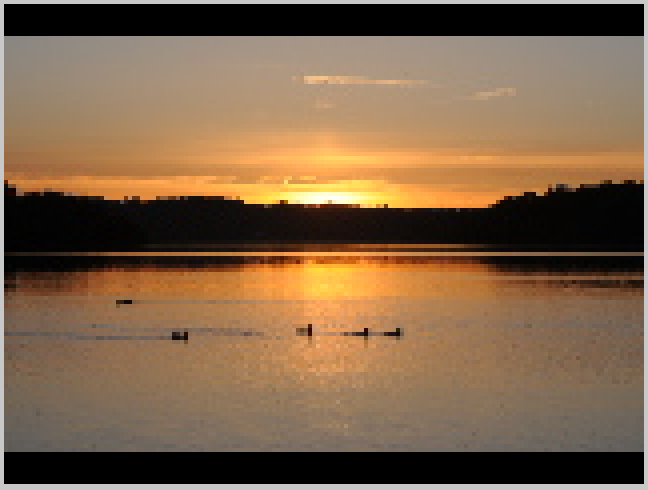

This was the predicament that photographer David Burr found himself in on the evening of 7th June 2014.

David had been at Bewl Water in Kent, UK, photographing the sunset across the lake. After returning home and examining his images, he had a choice to make – was he looking at a big cat, or was he interpreting another object to be a big cat moving across the lake behind the ducks?

To make things easier for you, let’s ‘zoom’ in a little.

After David posted the images on Flickr, various people offered suggestions on what it could have been. David also spent considerable time attempting to clarify the object, and some of those images can be seen in the Flickr album:

After all of this, the word ‘hoax’ began to come into the conversation, because big cats might be common in many parts of the world but Southern England is not one of them. So, not only were requests being made to enhance the image but, first, could the image be authenticated? Was it a true image to start with? If it wasn’t, then there would be no point in enhancing it.

As a result, David contacted Michael Hartsthorne at Best Evidence Technology, one of our UK partners, to seek some advice. It was from here that I was asked to take a look.

In order to put some clarity into this situation, we need to look at the integrity of the image first…

Straight away there are warning signs in the image posted. Professional digital photographs don’t come out of the camera with text printed over the image. Just from looking at that, I knew it had gone through some kind of image processing software. Luckily, David sent what he stated was the original image, along with others from that evening at the lake. From now on, we will work with those.

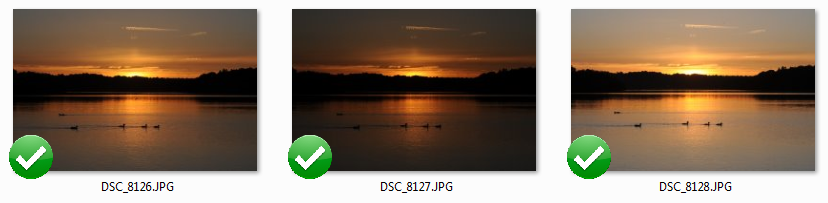

The three images were all visually different, and had a consecutive naming convention that I would expect coming from a Digital Still Camera (DSC).

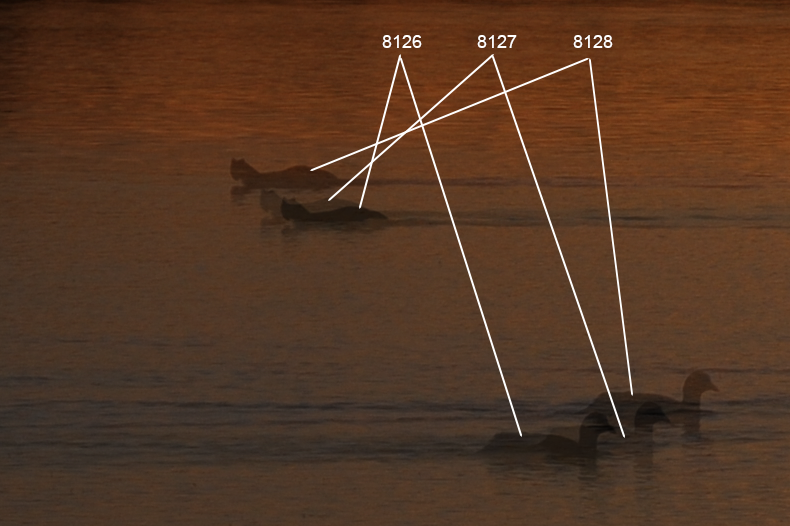
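The naming check is simple enough to sketch in a few lines of Python. This is only an illustration, not part of the workflow I used: the helper names are my own, and the pattern assumes the `DSC_` prefix followed by a frame counter, with an optional file extension.

```python
import re

def frame_numbers(names):
    """Extract the frame counter from names such as 'DSC_8126'."""
    numbers = []
    for name in names:
        # Assumed pattern: 'DSC_' + digits, optionally followed by an extension.
        match = re.fullmatch(r"DSC_(\d+)(?:\.\w+)?", name)
        if match is None:
            raise ValueError(f"unexpected file name: {name}")
        numbers.append(int(match.group(1)))
    return numbers

def is_consecutive(names):
    """True when the sorted frame counters increase by exactly one."""
    numbers = sorted(frame_numbers(names))
    return all(b - a == 1 for a, b in zip(numbers, numbers[1:]))

supplied = ["DSC_8126", "DSC_8127", "DSC_8128"]
print(is_consecutive(supplied))
```

A gap in the sequence proves nothing on its own (frames get deleted, and counters eventually roll over), but it would be a prompt to ask where the missing frames went.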

Before doing any individual analysis, it’s important to use these images together to see if the movement of objects in the image matches the numbering order. Are the ducks moving from left to right, and is our unknown object moving from right to left?

There was some vertical movement in the camera but all objects moved correctly, in the direction of the numbering order.

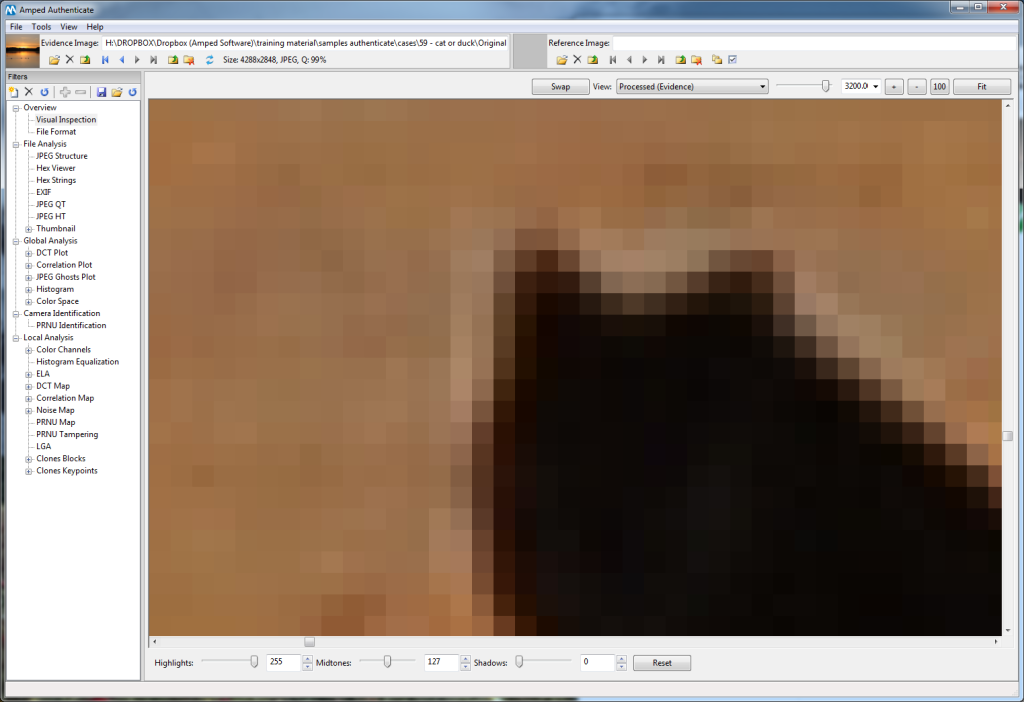

With no obvious signs of anything bizarre going on, it’s time to start interrogating the digital file. I have chosen DSC_8128, the last one in the series of three, as this was the file chosen originally and placed on-line.

Using Amped Authenticate, I am able to conduct a visual inspection of the file and examine an overview of the file format.

The most important part in this initial overview is to compare the image against the others presented, ensuring that certain parameters are identical. Again, nothing untoward was found: an overview of the EXIF (Exchangeable Image File Format) data showed information that all appeared normal. On the image David posted online, he had overlaid the date 7th June 2014, and this date was present within the EXIF fields.

The time the pictures were taken is also present within this data. Interestingly, all three report 21:09:06. Further EXIF data shows that image 8126 had an exposure time of 1/240, 8127 used 1/640 and our main image in question had 1/160. We know from our original visual comparison that they were all very slightly different, and now we know that the exposure time changed on each one. Why do the timestamps all suggest they were taken in the same second?

How could he have taken three different images, with three different settings, in the same second?

Modern DSLR Cameras have a function called Bracketing. This enables the camera to take a series of images with different settings. It’s still only one click of the shutter-release but the camera is able to take different images with different settings.

The use of a multi-shot function is actually documented in an EXIF field named ‘MakerNotes’, whose value reads: “Continuous, Exposure Bracketing”.
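To put numbers on those three exposures, the difference between two exposure times can be expressed in stops, where one stop is a doubling or halving of the time. The short Python sketch below uses only the exposure times reported in the EXIF data; the choice of DSC_8126 as the reference frame and the function name are my own assumptions for illustration.

```python
import math
from fractions import Fraction

# Exposure times reported in the EXIF data of the three frames.
exposures = {
    "DSC_8126": Fraction(1, 240),
    "DSC_8127": Fraction(1, 640),
    "DSC_8128": Fraction(1, 160),
}

def stops_between(reference, other):
    """Exposure difference in stops: +1 means twice the exposure time."""
    return math.log2(float(other / reference))

base = exposures["DSC_8126"]  # assumed reference frame
for name, exposure in exposures.items():
    print(name, f"{stops_between(base, exposure):+.2f} stops")
```

Relative to 8126, frame 8127 comes out at roughly 1.4 stops less exposure time and 8128 at roughly 0.6 stops more. One shorter and one longer exposure around a middle frame is at least consistent with a bracketed burst.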

Now that we have identified this information as being of no concern, it’s time to move on.

With Amped Authenticate, I have used DSC_8128 as the ‘Evidence Image’. During initial analysis I have compared this image with the other two files supplied. This is fine to compare standard settings, in order to identify any obvious differences but, in order to continue with our authentication analysis, we need to verify that the image is camera-original.

What does this mean?

During the initial file analysis, it is very easy to see the EXIF data. The fields presented to us all depend on the writer used to compile that image. A lot of this data can be manually edited, either at the time of writing or afterwards. For instance, if Adobe Photoshop had been used to write the file, this would be detailed in the image. Also, the fields themselves may be different, as the writing program can choose which fields to present. It is important to remember, though, that EXIF information can be edited to display incorrect data.

The Evidence Image EXIF information detailed what was used to write this file, revealing a NIKON D300. In order to continue then, I must compare the image with another image taken by the same camera model.

Amped Authenticate makes this a relatively simple process – you don’t have to go down to your local camera store, or put out a request on craigslist!

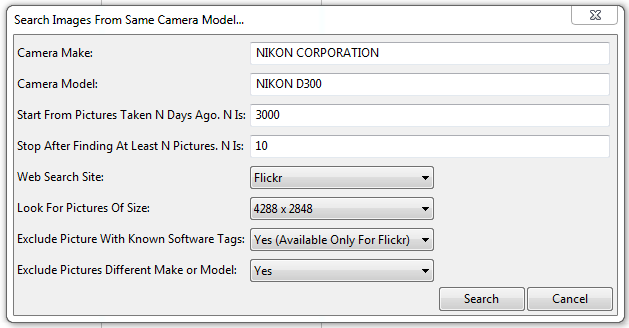

Within the tools menu, I have an option to search online. It automatically extracts the information needed for the search from the initial file analysis. It takes around 1 minute to scan 1000 files.

The number of files to locate can be edited, so after changing my parameters to 50 I trawled Flickr, and within 20 minutes I had my files. After previewing these (Amped Authenticate has a Batch File Format Analysis option to make things easier), I found that the majority were using a newer internal software version.

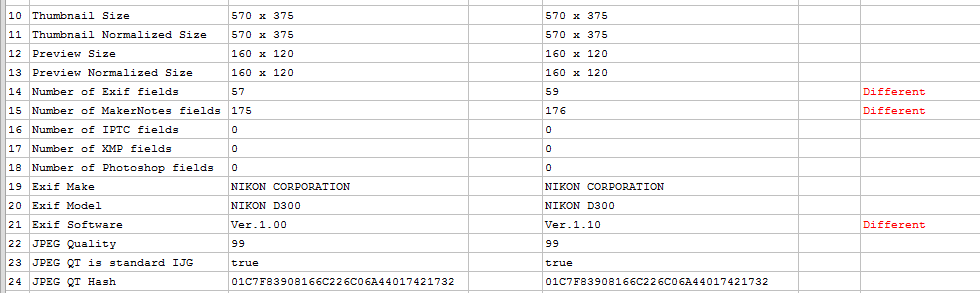

In the comparison table above, the evidence image is shown in the first columns, with the field name and then the value. Adjacent to that is one of the images located online. Most images located were using Software Ver.1.10 and, as can be seen in Row 21, the evidence image reported an earlier version. The use of an older version may also explain why there is a slightly lower number of EXIF fields. But could it be that these fields have been removed in order to hide something?
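The idea behind a comparison table like this can be sketched as a plain dictionary diff. This is not how Amped Authenticate does it internally, just a minimal illustration. The function name is hypothetical and the field values are placeholders, apart from the camera model and the Ver.1.10 string mentioned above.

```python
def compare_fields(evidence, reference):
    """Split metadata fields into differing values and fields missing on either side."""
    shared = evidence.keys() & reference.keys()
    return {
        "different": {
            key: (evidence[key], reference[key])
            for key in sorted(shared)
            if evidence[key] != reference[key]
        },
        "missing_in_evidence": sorted(reference.keys() - evidence.keys()),
        "missing_in_reference": sorted(evidence.keys() - reference.keys()),
    }

# Placeholder values for illustration only; they are not the real field contents.
evidence = {"Model": "NIKON D300", "Software": "<earlier version>"}
reference = {"Model": "NIKON D300", "Software": "Ver.1.10", "ExtraField": "<value>"}

report = compare_fields(evidence, reference)
for section, content in report.items():
    print(section, content)
```

Keeping "different" and "missing" apart matters here: a changed value and an absent field raise different questions, and both can have an innocent explanation such as a firmware update.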

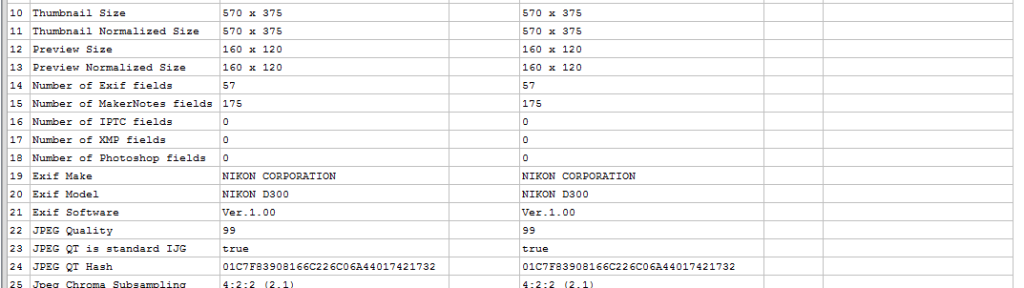

A further trawl, after increasing my search parameters, located an image with the same Software version and parameters, thereby producing no red difference notifications.

The values shown in Line 24 though are important. These matching values are of the Quantization Table.

Quantization is required during the compression process. With the thousands of devices that can make a JPEG, there are, again, thousands of different tables. Amped Authenticate’s internal database detects this table’s hash value as being used by a number of professional digital cameras, including the Nikon D300.
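For readers who want to see what a quantization table “hash” could look like, here is a rough Python sketch that walks the header segments of a JPEG, pulls out the tables stored in its DQT segments and fingerprints them. The function names and the choice of SHA-256 are my own assumptions; I don’t know how Amped Authenticate computes its value, and the demo bytes are synthetic rather than taken from the evidence file.

```python
import hashlib
import struct

def extract_quant_tables(data: bytes) -> dict:
    """Return {table_id: tuple of values} from the DQT segments of a JPEG."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    tables = {}
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        if marker == 0xFF:  # fill byte before a marker
            pos += 1
            continue
        if marker == 0xDA:  # SOS: compressed scan data follows, stop here
            break
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        segment = data[pos + 4:pos + 2 + length]
        if marker == 0xDB:  # DQT: one or more tables, stored in zigzag order
            offset = 0
            while offset < len(segment):
                precision, table_id = segment[offset] >> 4, segment[offset] & 0x0F
                size = 128 if precision else 64  # 16-bit or 8-bit entries
                raw = segment[offset + 1:offset + 1 + size]
                tables[table_id] = struct.unpack(">64H" if precision else "64B", raw)
                offset += 1 + size
        pos += 2 + length
    return tables

def table_fingerprint(tables: dict) -> str:
    """Hash the tables in id order so identical tables give identical digests."""
    digest = hashlib.sha256()
    for table_id in sorted(tables):
        digest.update(bytes([table_id]))
        digest.update(struct.pack(">64H", *tables[table_id]))
    return digest.hexdigest()

# Synthetic header for demonstration: SOI, one 8-bit DQT segment, then SOS.
demo = (b"\xff\xd8" + b"\xff\xdb" + struct.pack(">H", 67) + b"\x00"
        + bytes(range(1, 65)) + b"\xff\xda\x00\x02")
print(table_fingerprint(extract_quant_tables(demo)))
```

Two files written by the same encoder at the same quality setting should give the same fingerprint, which is what makes a lookup against a database of known cameras possible.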

Moving on to the Huffman Table, this presented a full match between the evidence image and the sourced D300 images. Although this doesn’t prove beyond doubt that the image has not been manipulated, the fact that other images taken with the same make and model of camera report the same values does mean that any alteration would have had to be more sophisticated.

The last part of the initial file analysis displays the embedded thumbnail or preview image. These images should be lower resolution versions of the Evidence Image, and they should also display in the same format.

The black padding along the top and bottom of the image was the same in all of the others supplied and also could be seen in other D300 images sourced from Flickr. All of the standard parameters matched, with no abnormal differences detected.

The analysis and comparisons completed so far have been on the Metadata and embedded images. But what if this metadata had been falsified in order to hide image editing?

The first stage of the Global Analysis looks for re-compression by analyzing the Discrete Cosine Transform (DCT) coefficients. This is a powerful tool that can help identify whether an image has been compressed more than once. A similar tool identifies geometric transformations such as rotation and re-scaling.
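Why should re-compression leave a trace in the DCT coefficients at all? A toy Python example helps. It quantizes a run of made-up coefficient values once, then twice with two different step sizes, and counts the empty histogram bins. The step sizes (5, then 2) and the input range are arbitrary assumptions, and a real detector works on the actual coefficients of the file rather than on a neat run of integers.

```python
from collections import Counter

def quantize(value, step):
    """Quantize roughly as a JPEG encoder does: divide by the step and round."""
    return round(value / step)

def histogram(coefficients, steps):
    """Histogram of coefficients after passing through each quantization step in turn."""
    current = list(coefficients)
    for index, step in enumerate(steps):
        current = [quantize(c, step) for c in current]
        if index < len(steps) - 1:
            current = [c * step for c in current]  # dequantize before the next save
    return Counter(current)

def empty_bins(hist):
    """Count integer bins between the lowest and highest occupied bin that hold nothing."""
    return sum(1 for b in range(min(hist), max(hist) + 1) if hist[b] == 0)

coefficients = range(-100, 101)            # made-up, evenly spread values
single = histogram(coefficients, [2])      # saved once with step 2
double = histogram(coefficients, [5, 2])   # saved with step 5, then again with step 2

print("empty bins, single save:", empty_bins(single))
print("empty bins, double save:", empty_bins(double))
```

The single save fills every bin, while the double save leaves regular gaps, because the first, coarser step only allows certain values through to the second. That kind of periodic pattern in the histogram is one sign of a second compression.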

By analyzing the Evidence Image and comparing this to the one we know was edited, (the one that had the text overlay and its exposure increased), there was nothing to identify any manipulation at this level. I carried out further tests on the color presentation and spread, but again found no evidence of any irregularities.

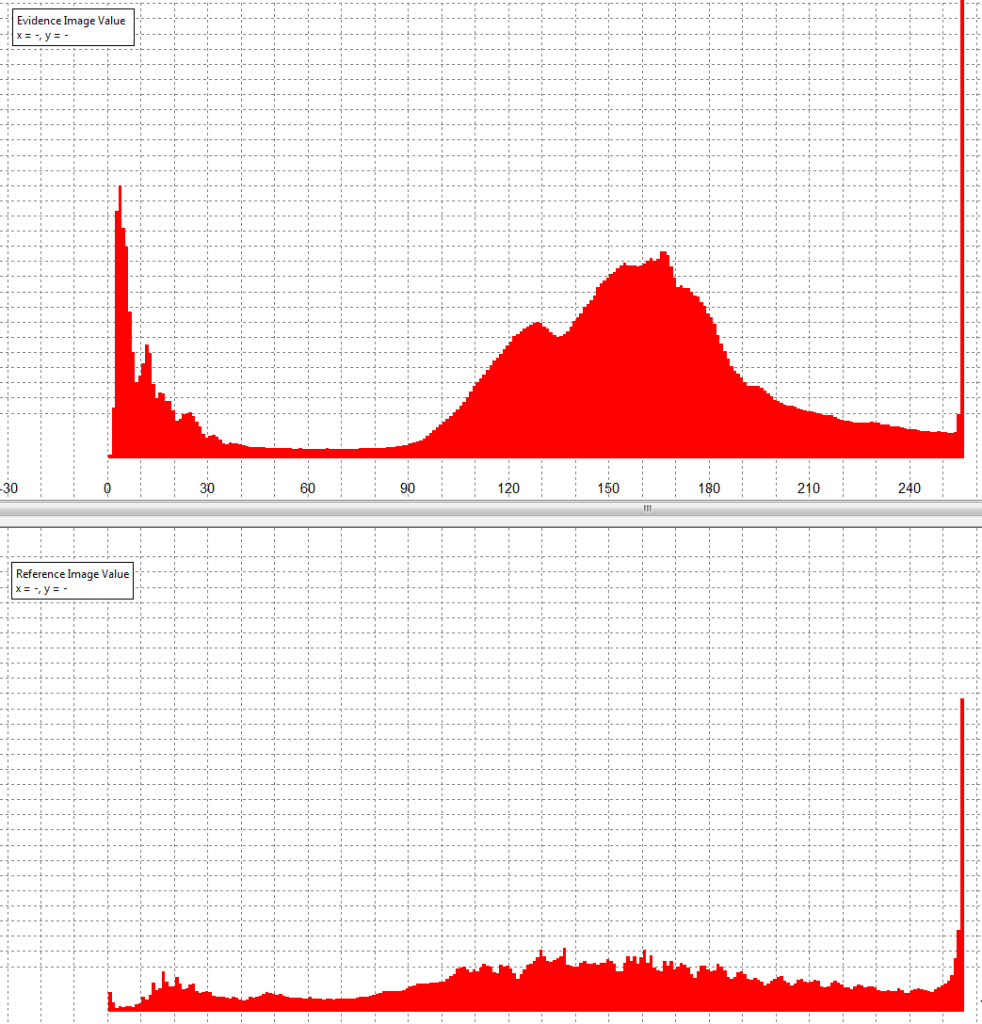

On the bottom of the graph seen above, we have the image known to have been edited. On the top we have the ‘Original’ Evidence Image.

An irregular pattern in the top image could suggest image manipulation, and a flattened pattern, similar to the graph displayed for the reference image, could suggest further post processing.

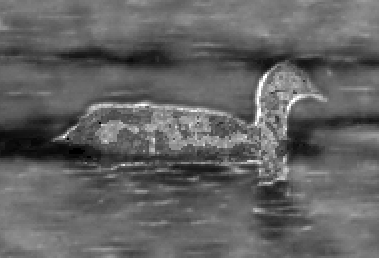

I’m going to move on now to looking at the specific region of interest, referred to as Local Analysis. After all, the cat is pretty localized. However, it’s important to remember that we have to compare our mystery animal with something. A comparison can then be made to ensure that this object displays the same artifacts and visual presentation as other objects in the scene.

Notice in the two images above how the light direction causes a kind of edge, and the side facing the camera has distinct luminance variations. Areas of the water and treeline were also analysed in the various channels in order to identify manipulation artifacts.

Another method that a lot of people may have heard of is Error Level Analysis, or ELA. Basically, this looks for small differences in the compression levels of different parts of an image. If an object had been copied into an image, and the image then re-saved, the differences would be highlighted.

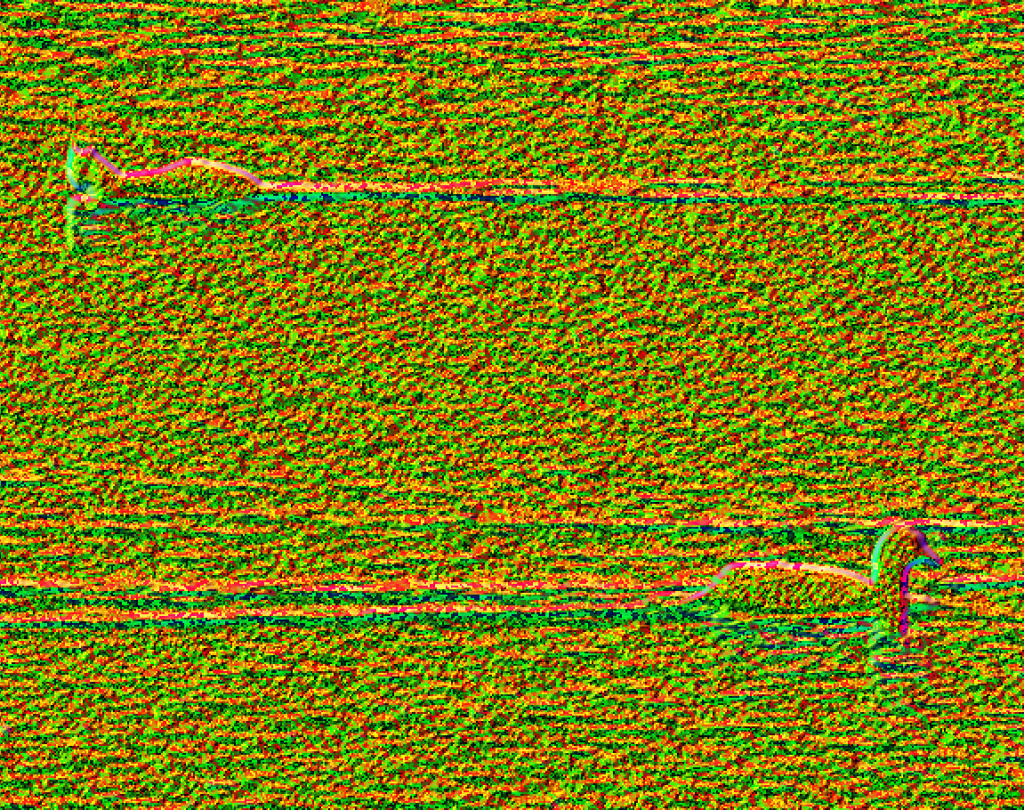

Amped Authenticate has a very natural progression through the various techniques to identify anomalies in an image. Even though each filter is parsed quickly by the software, the process of conducting and analyzing each result should not be done hastily. After completing other tests, I moved on to Luminance Gradient Analysis. This is ideal for scenes such as the one being analysed here, because it detects changes in the direction and intensity of light.

The light direction can be seen on the duck in the foreground, and also on the water where the animal has swum along. The pixels have a pink / purple coloring. This can be compared with the pattern presented by our mystery animal. Of particular interest is the amount of area that is capturing the light, in comparison to the duck.
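The principle behind a luminance gradient can be sketched in Python: convert each pixel to a brightness value, then measure in which direction, and how strongly, that brightness changes. This is a simplified illustration using central differences and Rec. 601 luma weights, both my own choices. The color mapping used by any particular tool is not reproduced here, and the 3x3 patch is synthetic.

```python
import math

def luminance(rgb):
    """Rec. 601 luma from an (R, G, B) triple."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b

def luminance_gradient(pixels):
    """Map (x, y) to (angle in degrees, magnitude) for every interior pixel."""
    luma = [[luminance(p) for p in row] for row in pixels]
    height, width = len(luma), len(luma[0])
    result = {}
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = (luma[y][x + 1] - luma[y][x - 1]) / 2  # change left to right
            gy = (luma[y + 1][x] - luma[y - 1][x]) / 2  # change top to bottom
            result[(x, y)] = (math.degrees(math.atan2(gy, gx)), math.hypot(gx, gy))
    return result

# Synthetic 3x3 patch that brightens from left to right.
patch = [[(0, 0, 0), (100, 100, 100), (200, 200, 200)] for _ in range(3)]
angle, magnitude = luminance_gradient(patch)[(1, 1)]
print(f"angle {angle:.1f} degrees, magnitude {magnitude:.1f}")
```

An object lit by the same source as the rest of the scene should show gradient directions that agree with its neighbours. An object pasted in from a photograph lit from elsewhere often will not.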

If you remember, there is no point attempting to enhance an image, if it’s been fabricated. If it’s been fabricated, the image is not authentic.

After analyzing the file, the metadata, the image and then the object, I can find no evidence of manipulation or fabrication.

As an analyst, I am unable to prove with certainty that the image is authentic. If I were in a courtroom, I would be able to state that I was unable to find any data proving that this was not an original. What does that mean exactly? Well, it means that it is probably an original… even though that cannot be stated scientifically with 100% certainty.

That is the crux of Image Authentication. I have to assume that the image is not authentic, and I have to find evidence proving that fact.

Could this be a radio-controlled boat with a cat body on top? Of course it could be.

Could this be a big grebe, swan, or larger duck, whose body position, along with the way it’s been captured, means that we immediately interpret it as a cat-like creature? Of course.

Finally then, it comes down to our own interpretation. To conclude, are there opportunities for enhancement? Not a lot, really. Even though the images are of a very high resolution, the area covered by the object is very small. The lighting also means that the majority of the object’s surface area has too little light to reveal detail.

I did say that would be my conclusion, but the final word must now go to my 10-year-old daughter…

“It’s a cat Dad, anybody can see it’s a cat!”

New Amped Authenticate Filters

Keep watching AMPED’s social media feeds for further news and blog posts on some new and progressive filters for Amped Authenticate, designed to identify re-compression in JPEG images.
